The By Question metric evaluates how well agents answer specific questions during interactions. You can apply it to all conversations (static) or use it selectively in trigger-based scenarios (dynamic). It supports focused feedback, targeted coaching, and continuous improvement.

What It Offers

- Standardized quality assessment framework

- Customizable evaluation criteria based on business needs

- Multi-language support for global operations

- Automated evaluation suggestions through AI

When to Use This Metric

| Use Case | Description |
|---|---|
| Quality Assurance | Systematically evaluate agent adherence to protocols or expected responses |
| Training Assessment | Measure how well agents follow prescribed interaction patterns |
| Compliance Monitoring | Verify how agents deliver critical information (disclaimers, privacy policies) |
| Performance Standardization | Apply consistent evaluation criteria across agents and interactions |

How It Works

The metric uses two adherence approaches:

Static Adherence

- Evaluates agent responses across all conversations with no conditional requirements.

- Best for universal standards like greetings or mandatory procedures.

- No trigger conditions required.

Dynamic Adherence

- Evaluates agent responses only when specific triggers occur.

- Uses customer or agent utterances as triggers.

- Best for conditional scenarios requiring specific responses in certain contexts.

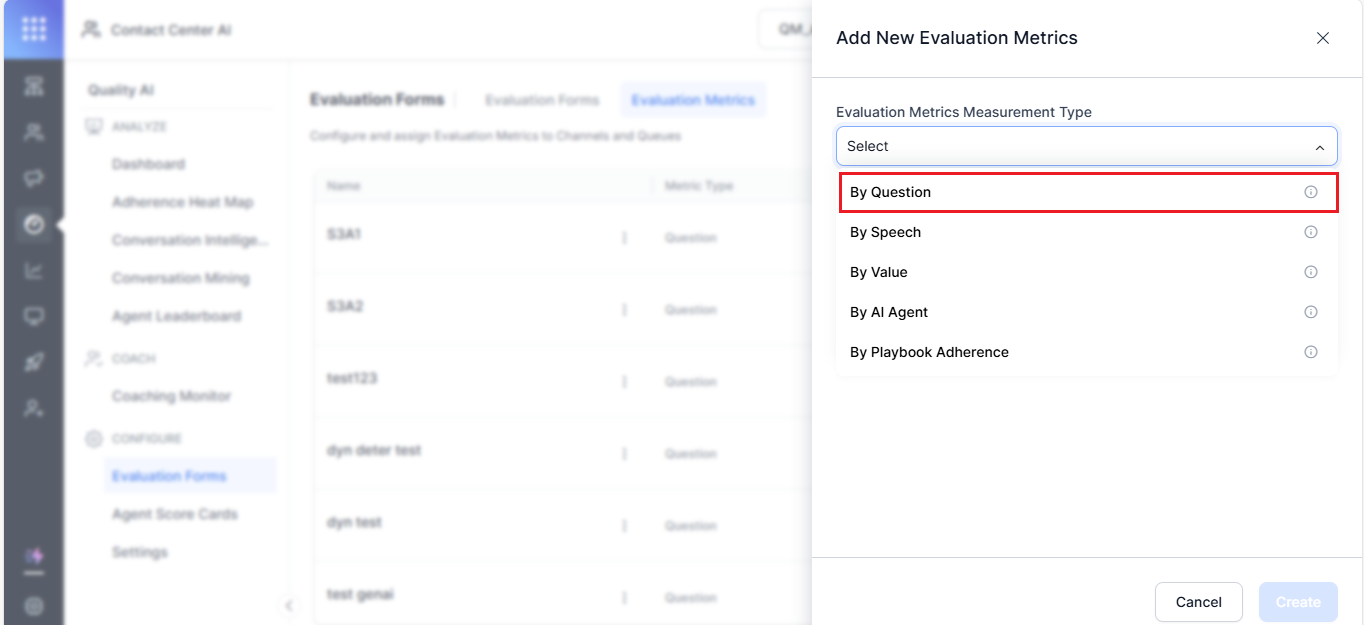
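
The difference between the two approaches can be sketched in a few lines of Python. This is an illustration only, not the product's implementation: the `Metric` class, the `evaluate` function, the `not_applicable` outcome, and the case-insensitive substring matching are all assumptions made for the example.

```python
from dataclasses import dataclass, field
from typing import List


@dataclass
class Metric:
    """Hypothetical stand-in for a By Question metric."""
    adherence_type: str                      # "static" or "dynamic"
    answers: List[str]                       # acceptable agent utterances
    triggers: List[str] = field(default_factory=list)


def contains(phrases: List[str], utterances: List[str]) -> bool:
    """True if any phrase occurs in any utterance (case-insensitive substring).
    Substring matching is a placeholder for the real detection method."""
    return any(p.lower() in u.lower() for p in phrases for u in utterances)


def evaluate(metric: Metric, customer_utts: List[str], agent_utts: List[str]) -> str:
    """Return 'adhered', 'not_adhered', or 'not_applicable' (assumed labels)."""
    if metric.adherence_type == "dynamic":
        # Dynamic: only evaluate when a customer or agent utterance fires a trigger.
        if not contains(metric.triggers, customer_utts + agent_utts):
            return "not_applicable"
    # Static metrics reach this point on every conversation.
    return "adhered" if contains(metric.answers, agent_utts) else "not_adhered"
```

In this sketch a static greeting metric is scored on every conversation, while a dynamic refund metric is scored only when a trigger such as "I need a refund" appears.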

Configure By Question Metric

- Navigate to Quality AI > Configure > Evaluation Forms > Evaluation Metrics.

- Select + New Evaluation Metric.

-

From the Evaluation Metrics Measurement Type dropdown, select By Question.

-

Enter a descriptive Name (for example,

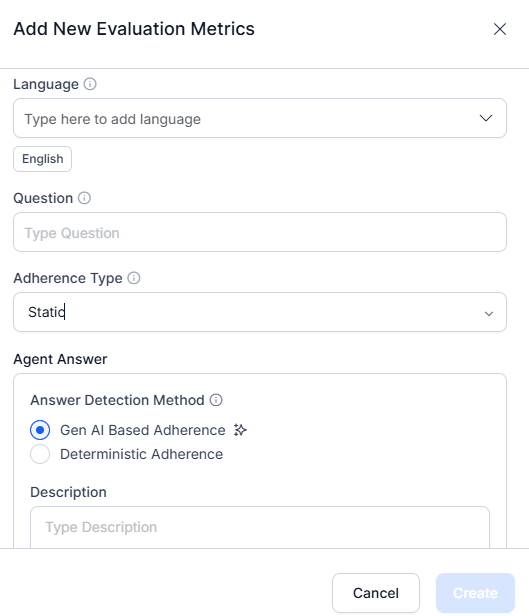

Agent's Warm Greeting). - Select a Language.

-

Enter an evaluation Question-a reference prompt for supervisors during audits and reviews.

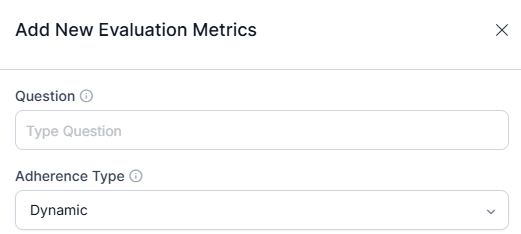

- Select the Adherence Type (Static or Dynamic).

For Static, configure at least one agent answer utterance. For Dynamic, configure at least one trigger and one agent answer utterance.
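
The minimum requirements for each adherence type can be expressed as a small check. The sketch below is hypothetical: `validate_metric` and its parameters are names invented for illustration and do not correspond to any product API.

```python
from typing import List, Sequence


def validate_metric(adherence_type: str,
                    answers: Sequence[str],
                    triggers: Sequence[str] = ()) -> List[str]:
    """Return a list of problems; an empty list means the minimum rules are met."""
    problems = []
    if adherence_type not in ("static", "dynamic"):
        problems.append("adherence type must be 'static' or 'dynamic'")
    if not answers:
        # Both adherence types need at least one agent answer utterance.
        problems.append("at least one agent answer utterance is required")
    if adherence_type == "dynamic" and not triggers:
        # Only Dynamic additionally needs a trigger.
        problems.append("dynamic adherence requires at least one trigger")
    return problems
```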

Static Adherence Configuration

Applies to all calls without any condition or trigger. Use for mandatory, universal compliance items like greeting scripts or regulatory disclaimers.

- Define acceptable utterances for the queue.

- Set a similarity threshold to evaluate whether the agent’s response matches the predefined utterance.

- Configure at least one agent utterance template.

Dynamic Adherence Configuration

Evaluates adherence only when a configured trigger is detected. Use for context-sensitive checks.

- Define at least one trigger (customer or agent utterance) and one acceptable agent response.

- Set the similarity threshold based on criticality:

  - Lower threshold (~60%, yellow) for casual interactions or greetings

  - Higher threshold (~100%, green) for critical topics such as legal disclaimers or privacy policies

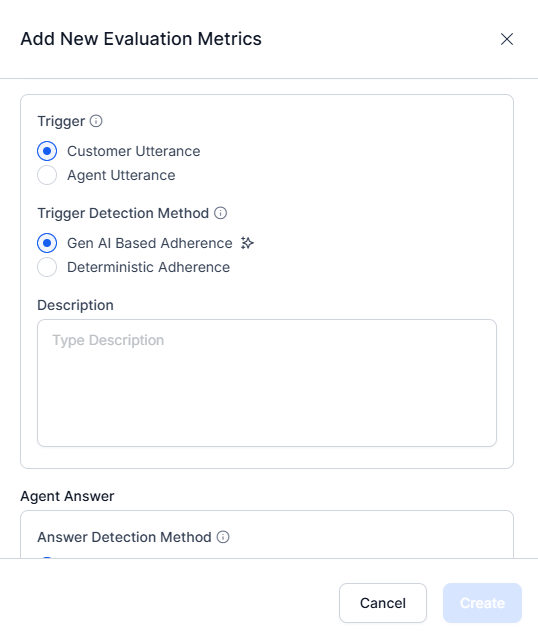

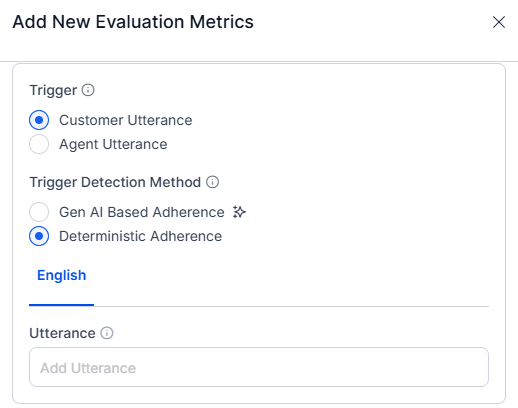

Trigger Configuration

Choose the utterance source that initiates the trigger:

| Trigger Type | Description |
|---|---|
| Customer Utterance | Triggered by something the customer says (for example, "I need a refund") |
| Agent Utterance | Triggered by something the agent says (for example, "Can I transfer this call to support") |

Trigger Detection Method

| Method | Description |
|---|---|
| GenAI-Based | Uses LLMs to detect intent contextually. No sample utterances or thresholds required. |
| Deterministic | Relies on predefined sample utterances and semantic similarity matching. |

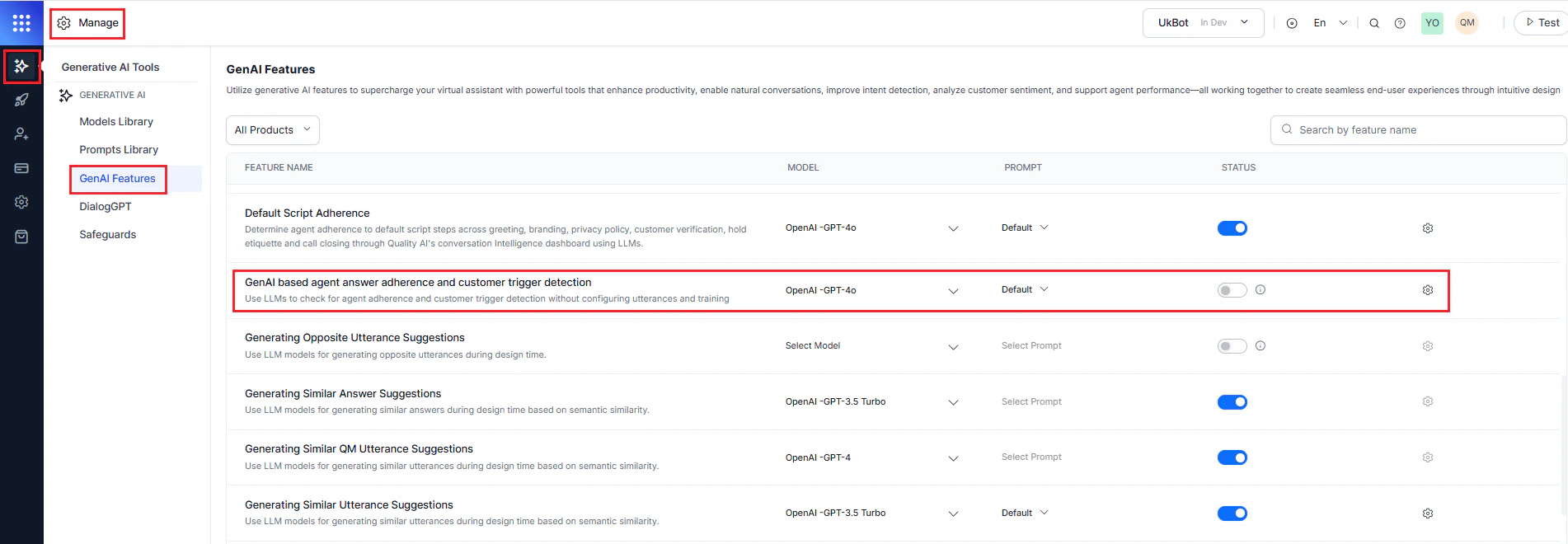

Enable GenAI-Based Features (Prerequisites)

To activate GenAI-based features:

- Navigate to Manage > Generative AI > GenAI Features.

- Enable and publish the following features:

  - GenAI-based agent answer adherence

  - GenAI-based customer trigger detection

Agent Answer Configuration

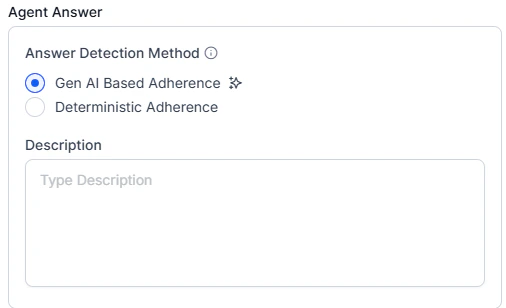

GenAI-Based Adherence

Uses LLMs to detect meaning, context, and intent, and evaluates whether the agent’s answer fulfills the intent, even if phrased differently.

- Select GenAI-Based Adherence as the answer detection method.

- Enter a Description explaining the metric’s intent.

Before using GenAI-based adherence, ensure that supported models and the GenAI features (GenAI-based agent answer adherence and customer trigger detection) are enabled. No sample utterances or thresholds are required; LLMs evaluate adherence using zero-shot prompts. For effective prompt writing, see the Auto QA Prompting Guide.

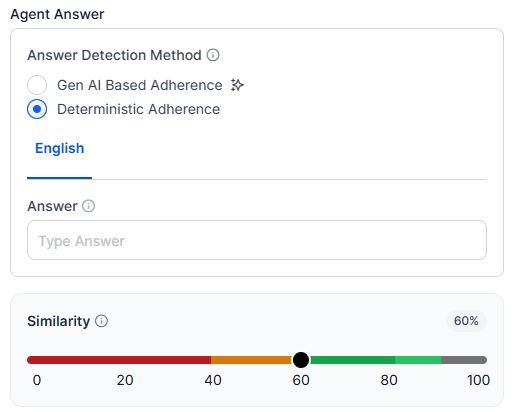

Deterministic Adherence

Evaluates responses based on semantic similarity to predefined reference utterances.

- Select Deterministic Adherence.

- Define an Answer: the acceptable utterances per queue. Use Generative AI to suggest similar variations.

- Set the Similarity threshold:

  - Lower threshold (~60%) for soft skills like greetings and etiquette.

  - Higher threshold (~100%) for compliance-critical statements (Policy, Privacy, Disclaimers).
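
To show how a threshold changes the outcome, here is a minimal sketch of threshold-based matching. It makes two loud assumptions: the function names are hypothetical, and `difflib.SequenceMatcher` from the Python standard library is swapped in as a simple character-level similarity score. The product describes its matching as semantic, so real scores will differ; only the thresholding logic is the point here.

```python
from difflib import SequenceMatcher
from typing import Iterable


def similarity(a: str, b: str) -> float:
    """Character-level similarity as a percentage (0 to 100).
    A lexical stand-in, NOT the semantic similarity the product uses."""
    return SequenceMatcher(None, a.lower(), b.lower()).ratio() * 100


def matches(agent_utterance: str, references: Iterable[str], threshold: float) -> bool:
    """True if the utterance scores at or above the threshold
    against at least one reference utterance."""
    return any(similarity(agent_utterance, ref) >= threshold for ref in references)


greeting_refs = ["Hi, thank you for calling"]
# A loosely worded greeting can pass a lower threshold...
loose_ok = matches("Hello, thanks for calling", greeting_refs, threshold=60)
# ...but the same wording fails when an exact match is demanded.
strict_ok = matches("Hello, thanks for calling", greeting_refs, threshold=100)
```

With a threshold near 100%, only wording that is essentially identical to a reference passes, which is why that setting suits disclaimers and policy statements.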

Count Type Configuration

Choose how the metric evaluates adherence across the conversation:

Entire Conversation

Evaluates adherence at any point in the interaction.

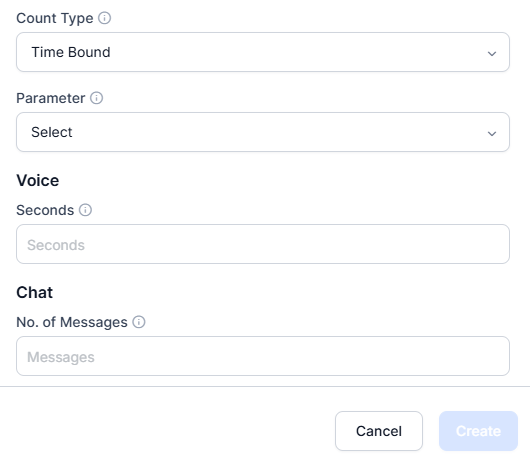

Time Bound

Focuses on a specific timeframe: the first or last X seconds or messages.

- Select Parameter: First Part or Last Part of the conversation.

- Set the evaluation window:

  - Voice: enter the number of seconds.

  - Chat: enter the number of messages.

- Select Create to save and activate the metric.

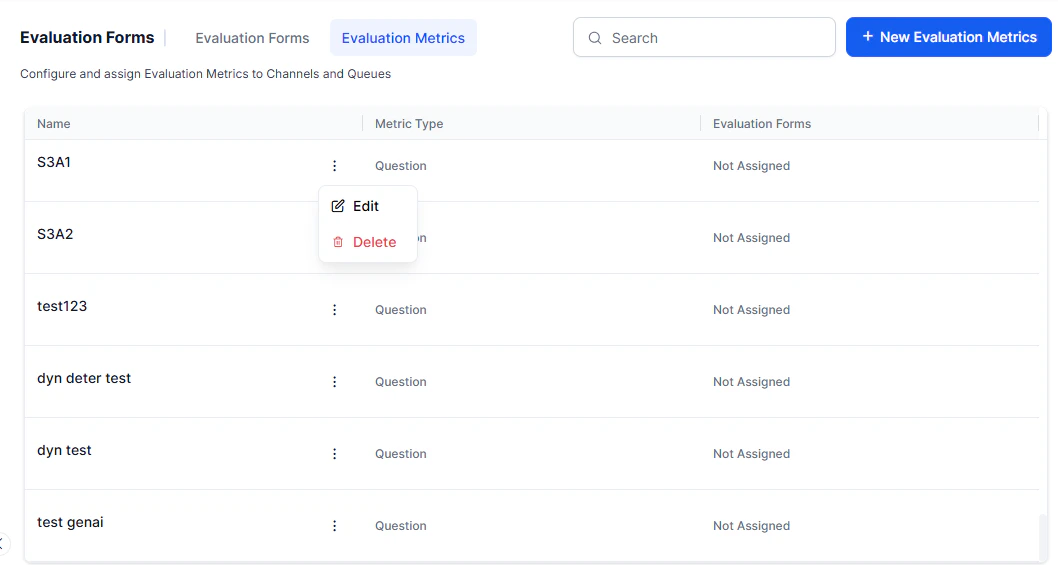
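
The Time Bound window can be pictured as a filter applied to the conversation before adherence is checked. The following sketch assumes a hypothetical `Turn` record with a per-utterance time offset for voice; the function names and the boundary handling (inclusive limits) are illustrative choices, not documented product behavior.

```python
from dataclasses import dataclass
from typing import List


@dataclass
class Turn:
    """Hypothetical transcript entry."""
    speaker: str      # "agent" or "customer"
    text: str
    offset_s: float   # seconds from conversation start (voice only)


def voice_window(turns: List[Turn], part: str, seconds: float,
                 duration_s: float) -> List[Turn]:
    """Keep turns in the first or last `seconds` of a voice call."""
    if part == "first":
        return [t for t in turns if t.offset_s <= seconds]
    return [t for t in turns if t.offset_s >= duration_s - seconds]


def chat_window(turns: List[Turn], part: str, messages: int) -> List[Turn]:
    """Keep the first or last `messages` turns of a chat."""
    if messages <= 0:
        return []  # guard: turns[-0:] would otherwise return every turn
    return turns[:messages] if part == "first" else turns[-messages:]
```

Only the turns that survive the filter would then be checked against the configured answer utterances.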

Edit or Delete By Question Metric

- Select the metric from the By Question category.

- Choose an option: Edit to modify, or Delete to remove.

- Select Update to save changes.

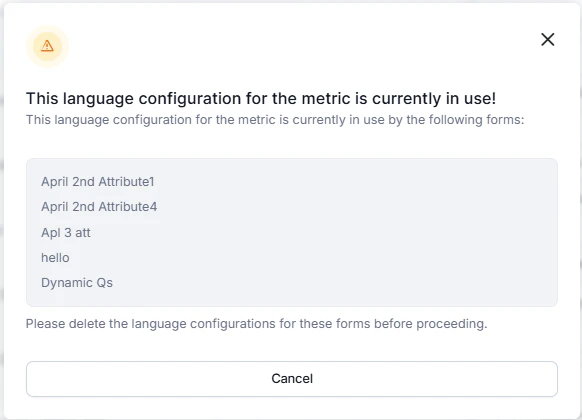

Language Dependency Warnings

- You can’t remove a language if any evaluation form or attribute uses it.

- Remove the language from all associated forms and attributes before modifying.

Delete Warnings

Before deleting a metric:

- Remove it from all associated evaluation forms.

- If any attributes link to the metric, assign them to a different metric first.

- The system allows deletion only after all dependencies are resolved.
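
The dependency rule above amounts to a pre-delete check. As a rough sketch, assuming forms and attributes are available as plain dictionaries (the data shapes and the `deletion_blockers` name are hypothetical), it might look like this:

```python
from typing import Dict, List


def deletion_blockers(metric_name: str,
                      forms: Dict[str, List[str]],
                      attributes: Dict[str, str]) -> List[str]:
    """List everything that still references the metric.

    forms:      evaluation form name -> names of metrics it uses
    attributes: attribute name -> name of the metric it is linked to
    An empty result means the metric has no remaining dependencies.
    """
    blockers = [f"evaluation form '{name}'"
                for name, metrics in forms.items() if metric_name in metrics]
    blockers += [f"attribute '{name}'"
                 for name, linked in attributes.items() if linked == metric_name]
    return blockers
```

Deletion would proceed only when the returned list is empty; otherwise each entry names a form to update or an attribute to reassign.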