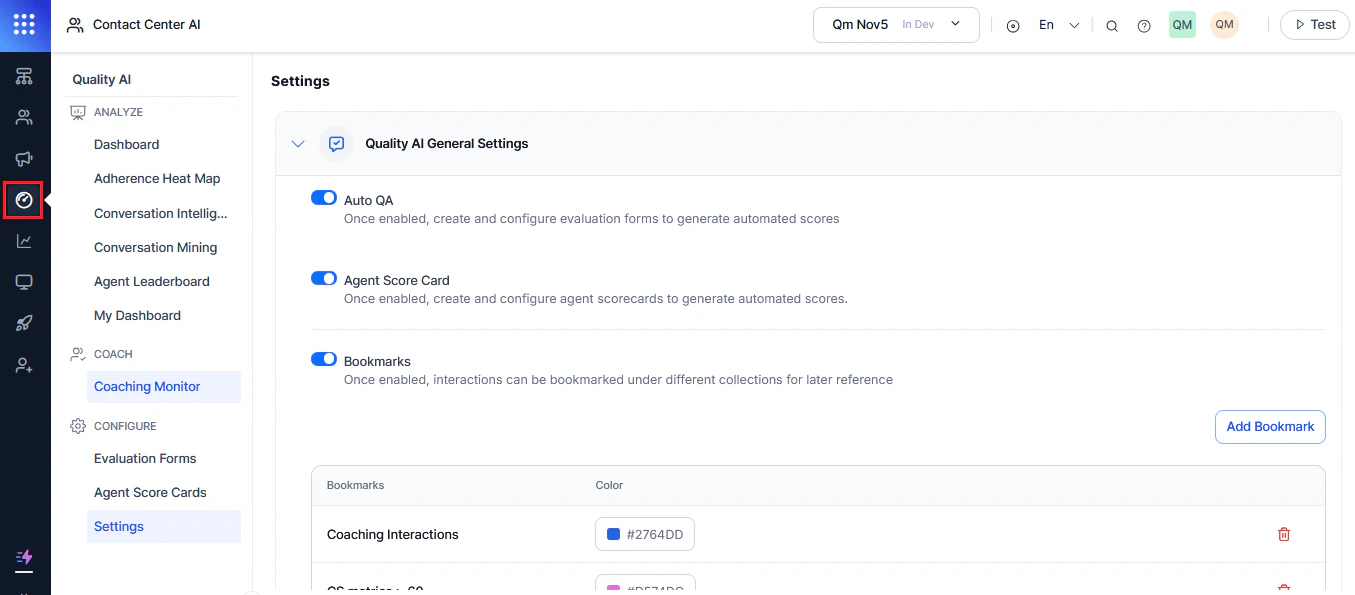

Quality AI General Settings lets you configure features that enhance agent performance and maintain compliance. You can manage Auto QA scoring, agent access to interactions, and auditor anonymity at the app level. Navigate to Quality AI > Configure > Settings > Quality AI General Settings.

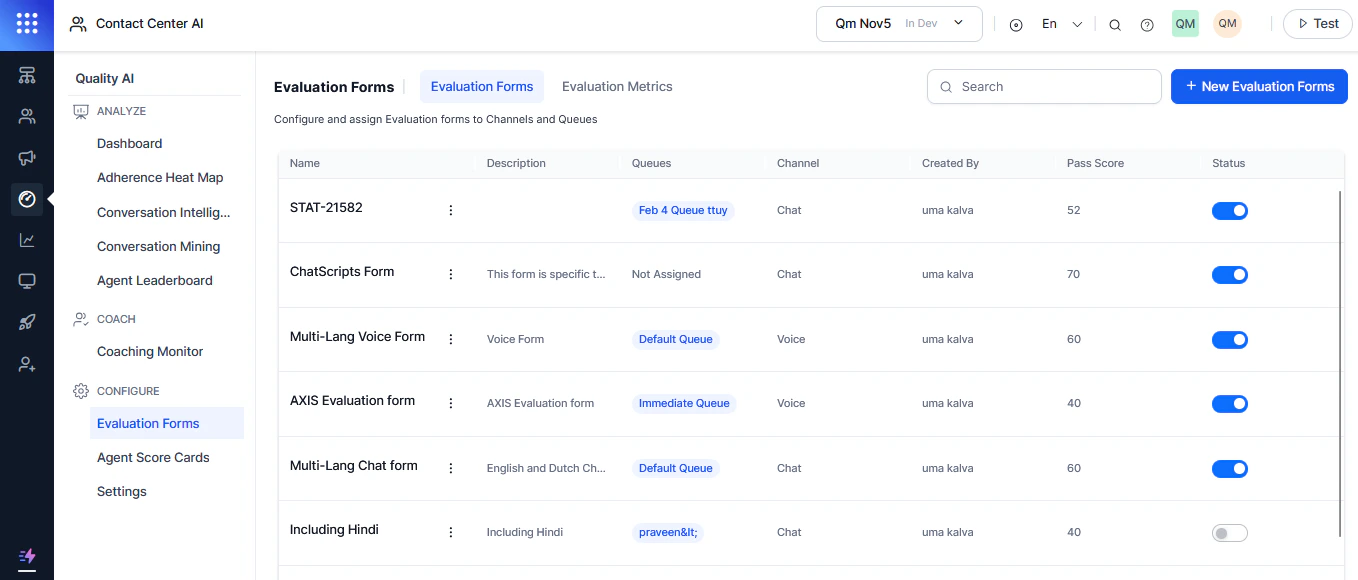

Auto QA

Auto QA enables automated evaluation using configured forms. When disabled, automated QA scores, Conversation Mining, Dashboards, and Evaluation Forms are hidden.

Enable Auto QA

- Expand Quality AI General Settings.

- Toggle on Auto QA.

- Select Save.

With Auto QA enabled, the following features are available:

- Dashboards (Fail Statistics, Performance Monitor)

- Adherence Heatmap

- Conversation Mining

- Agent Leaderboard

- Coaching Monitor

- Evaluation Forms and Metrics

Only administrators can enable Auto QA. It is off by default. Disabling Auto QA hides Agent Scorecards and bookmarks, regardless of user permissions. Auto QA operates independently of Conversation Intelligence; you can enable either one without the other.

Disable Auto QA

- Toggle off Auto QA.

- Select Confirm, then Save.

Disabling Auto QA turns off automated scoring across the entire app and all queues.
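
The visibility rules above can be modeled as a small gating function. This is a minimal sketch, not the product's implementation: the feature names are labels copied from this page, `GeneralSettings` and `visible_features` are hypothetical, and it assumes the listed features are exactly the ones gated by the Auto QA toggle.

```python
from dataclasses import dataclass

# Labels copied from this page for illustration; they are NOT identifiers
# from the Quality AI product (assumption).
AUTO_QA_FEATURES = frozenset({
    "Dashboards",
    "Adherence Heatmap",
    "Conversation Mining",
    "Agent Leaderboard",
    "Coaching Monitor",
    "Evaluation Forms and Metrics",
})

# Hidden when Auto QA is off, regardless of user permissions.
AUTO_QA_DEPENDENT = frozenset({"Agent Scorecards", "Bookmarks"})


@dataclass
class GeneralSettings:
    """Hypothetical settings holder; Auto QA is off by default."""
    auto_qa: bool = False


def visible_features(settings, permitted):
    """Return the permitted features that stay visible under `settings`.

    Only the Auto QA toggle is modeled here; the separate Agent Score Card
    and Bookmarks toggles are ignored for brevity.
    """
    if settings.auto_qa:
        return set(permitted)
    return set(permitted) - (AUTO_QA_FEATURES | AUTO_QA_DEPENDENT)
```

In this sketch, user permissions are applied first and the Auto QA switch then removes features on top of them, which mirrors the "regardless of user permissions" rule.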

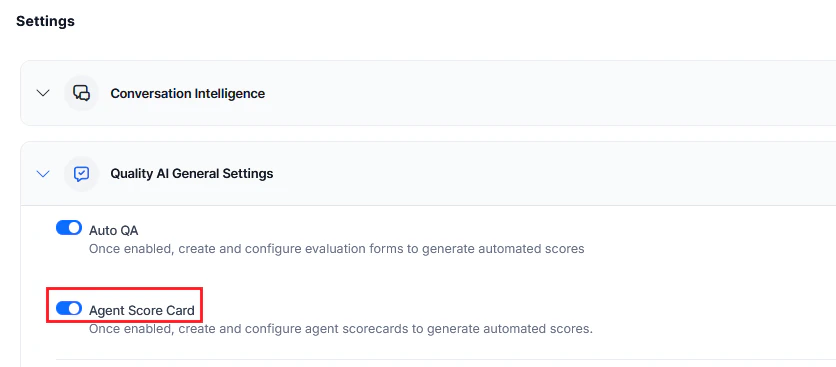

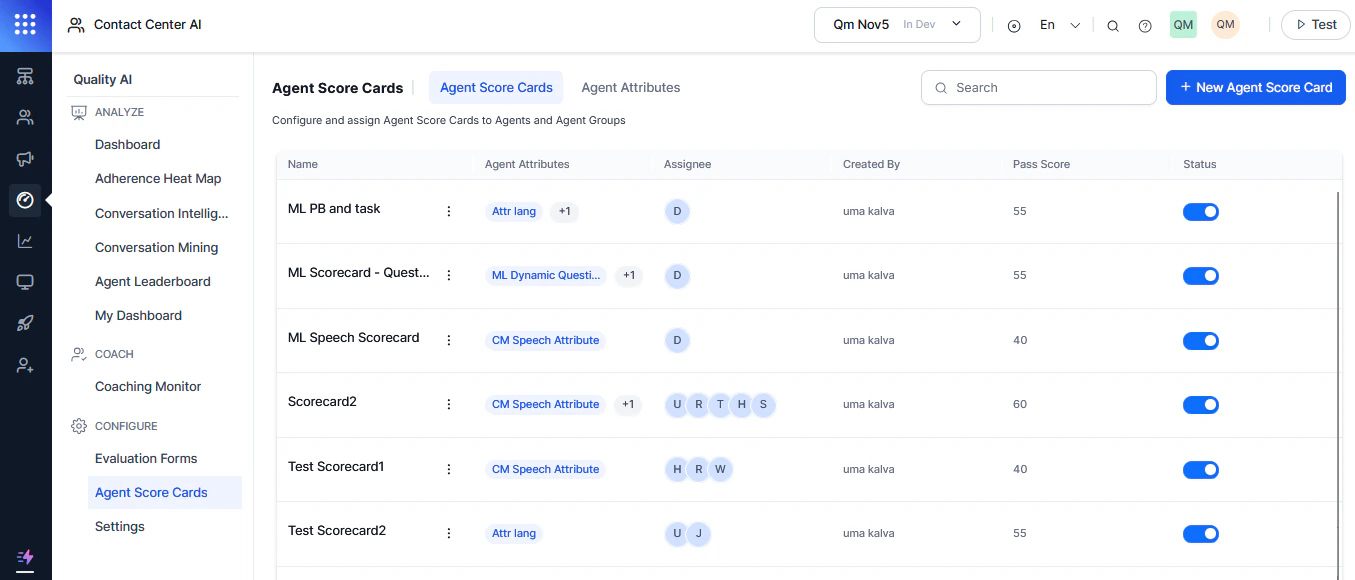

Agent Score Card

Agent Scorecards enable scoring at the agent level using evaluation forms.

Enable Agent Score Card

- Expand Quality AI General Settings.

- Toggle on Agent Score Card.

- Select Save.

Disable Agent Score Card

- Toggle off Agent Score Card.

- Select Save.

Disabling Agent Score Card turns off automated agent scoring across the application and all queues.

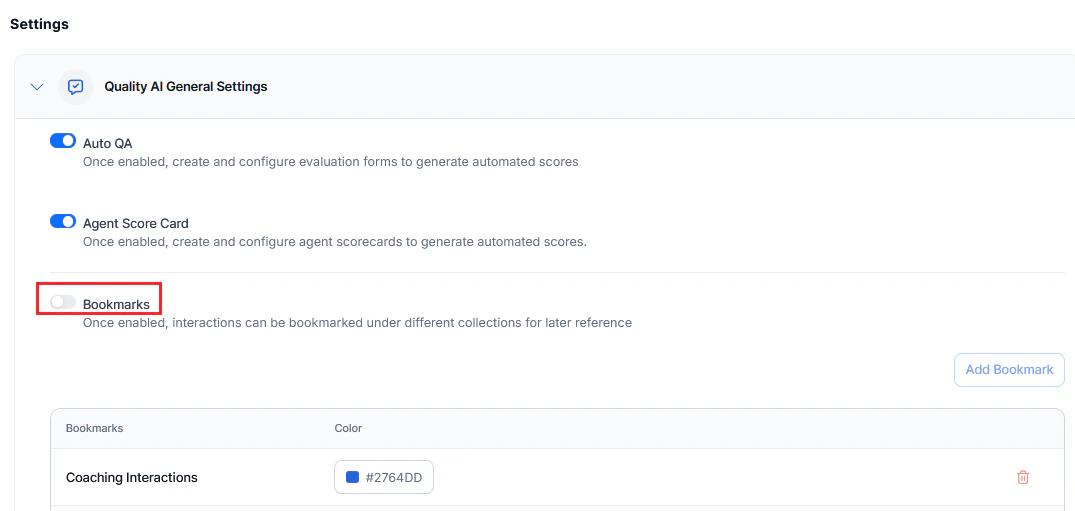

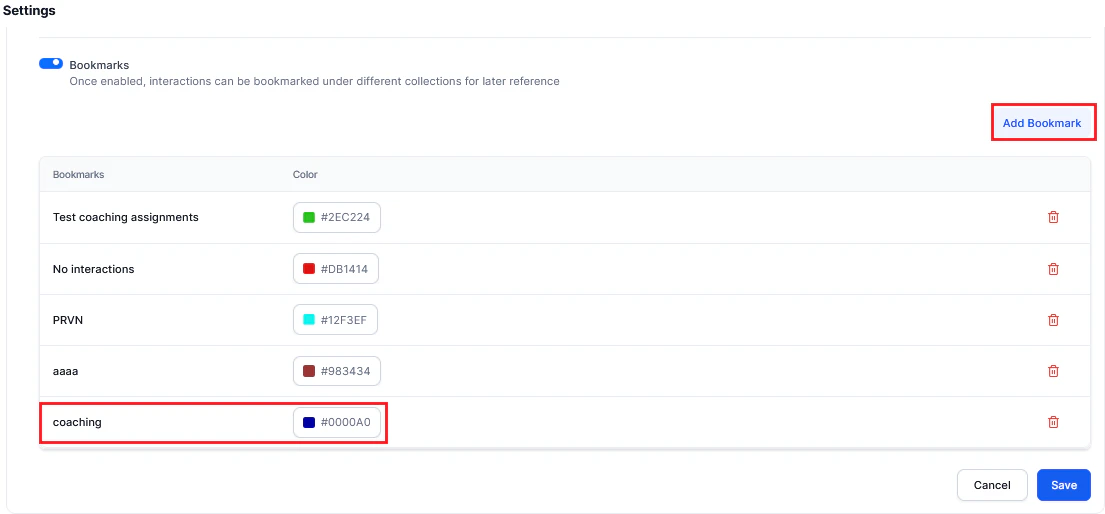

Bookmarks

Bookmarks let you tag interactions (calls, messages) for easy reference in Conversation Mining and dashboards.

Enable Bookmarks

- Expand Quality AI General Settings.

- Toggle on Bookmarks. This makes bookmarks available in Conversation Mining (Interactions) and Dashboard > Agent Leaderboard (Evaluation).

- Select Add Bookmark.

- Enter a Bookmark name.

- Select a Color.

- Select Save.

Disable Bookmarks

- Toggle off Bookmarks.

- Select Confirm, then Save.

Deleting a bookmark removes only the tag, not the associated interactions.

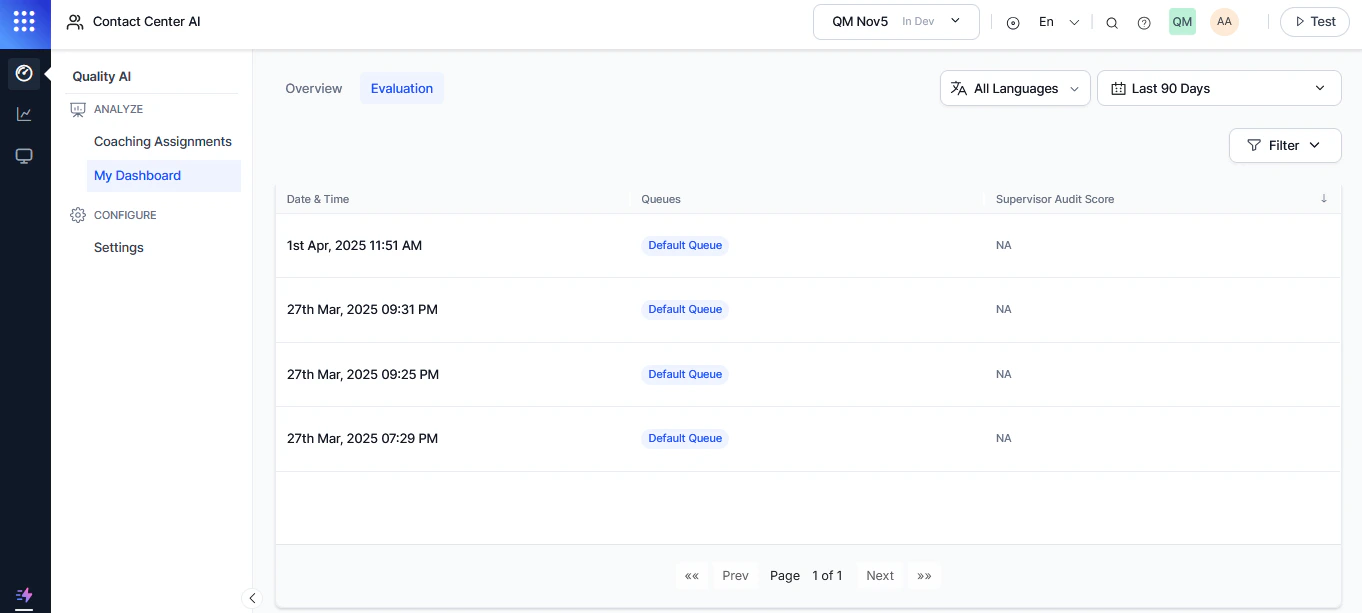

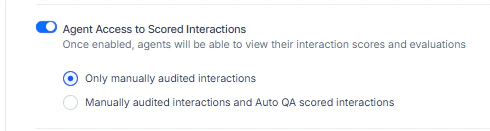

Agent Access to Scored Interactions

This setting controls which scored interactions agents can view to improve their performance. It is off by default. When enabled, an Evaluation tab appears on the agent dashboard next to the Overview tab. Agents open it via My Dashboard > Overview > Evaluation.

| Option | Description |
|---|---|
| Only manually audited interactions | Agents see only supervisor-audited interactions |
| Manually audited and Auto QA scored | Agents see both Auto QA-scored and supervisor-audited interactions |
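
The two options in the table amount to a filter over scored interactions. The sketch below illustrates that filter under stated assumptions: `AgentAccess`, `Interaction`, and `visible_to_agent` are hypothetical names, and the two boolean flags stand in for however the product actually records how an interaction was scored.

```python
from dataclasses import dataclass
from enum import Enum


class AgentAccess(Enum):
    """Hypothetical encoding of the setting described on this page."""
    OFF = "off"  # the setting is off by default
    MANUAL_ONLY = "manual_only"
    MANUAL_AND_AUTO_QA = "manual_and_auto_qa"


@dataclass(frozen=True)
class Interaction:
    interaction_id: str
    manually_audited: bool  # audited by a supervisor (assumed flag)
    auto_qa_scored: bool    # scored by Auto QA (assumed flag)


def visible_to_agent(interactions, access):
    """Return the interactions an agent may view for the given option."""
    if access is AgentAccess.OFF:
        return []
    if access is AgentAccess.MANUAL_ONLY:
        return [i for i in interactions if i.manually_audited]
    # MANUAL_AND_AUTO_QA: either kind of scoring makes the interaction visible.
    return [i for i in interactions if i.manually_audited or i.auto_qa_scored]
```

An interaction that was neither audited nor scored is never returned, since the setting only covers scored interactions.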

Agent Dashboard Insights

Controls whether agents can view Sentiment and Resolution metrics on their own dashboard.

| State | What agents see |
|---|---|
| Disabled (default) | Standard dashboard only: coaching assignments, scorecards, and performance data |
| Enabled | Additional insights: sentiment trends, topic-level sentiment, resolution rates, and drill-down metrics |

AI Justification and Evidence

When enabled, agents can view AI-generated justifications and supporting evidence for each question and metric. Details are shown by question, value, and AI Agent metric type so agents understand how scores are calculated.

Audit Settings

Controls agent visibility and privacy during audits:

| Setting | Description |
|---|---|
| Allow agents to view AI-generated emotions and sentiment | Agents can view emotional indicators and sentiment scores per interaction. When off, agents can’t access this data in the audit view. |
| Hide Auditor Details for Agent | Hides auditor identity from evaluated agents. When active, the system displays Anonymous instead of the auditor’s name. |
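
As an illustration of how the two audit settings shape what an agent sees, the sketch below builds an agent-facing view of a single audit. Everything here is hypothetical: `AuditSettings`, `audit_view_for_agent`, and the dictionary keys are invented for the example. No defaults are assumed for either setting, since this page does not state them.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class AuditSettings:
    """Hypothetical holder for the two toggles in the table above."""
    show_emotions_and_sentiment: bool
    hide_auditor_details: bool


def audit_view_for_agent(audit, settings):
    """Build the agent-facing view of one audit record (a plain dict).

    `audit` is assumed to carry "score", "auditor", and optionally
    "sentiment"; these keys are illustrative only.
    """
    view = {"score": audit["score"]}
    # With auditor details hidden, the agent sees "Anonymous" instead of a name.
    view["auditor"] = "Anonymous" if settings.hide_auditor_details else audit["auditor"]
    # Sentiment data is included only when the first toggle is on.
    if settings.show_emotions_and_sentiment:
        view["sentiment"] = audit.get("sentiment")
    return view
```

The original record is left unchanged, so supervisors and auditors could still be shown the full record while agents get the reduced view.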

Manual Audit

Supervisors can enable additional metric types for comprehensive manual quality evaluations.

Audit Speech Metrics

Provides speech analysis capabilities for quality assurance:

- If By Speech is on, auditors enter responses for each speech metric (clarity, tone, pace).

Audit Playbook Metrics

Evaluates agent adherence to defined conversation playbooks:

- If By Step is on, auditors input responses step-by-step.

- If Entire Playbook is on, auditors use a consolidated evaluation interface.