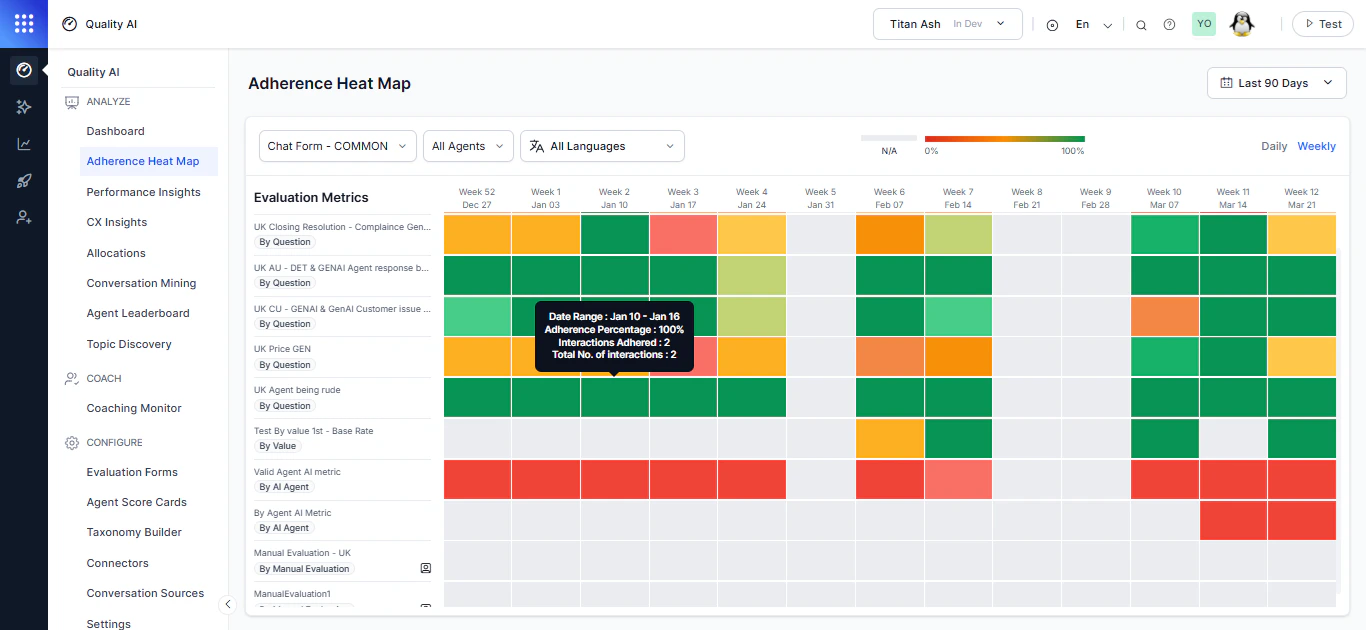

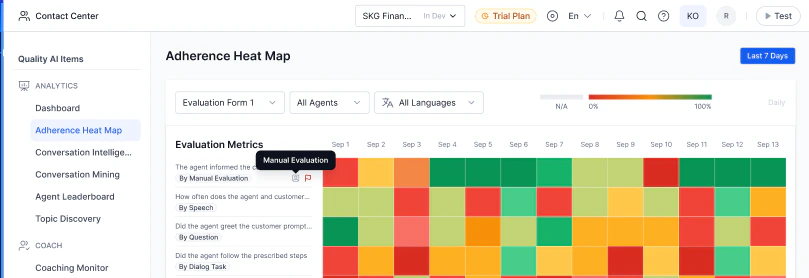

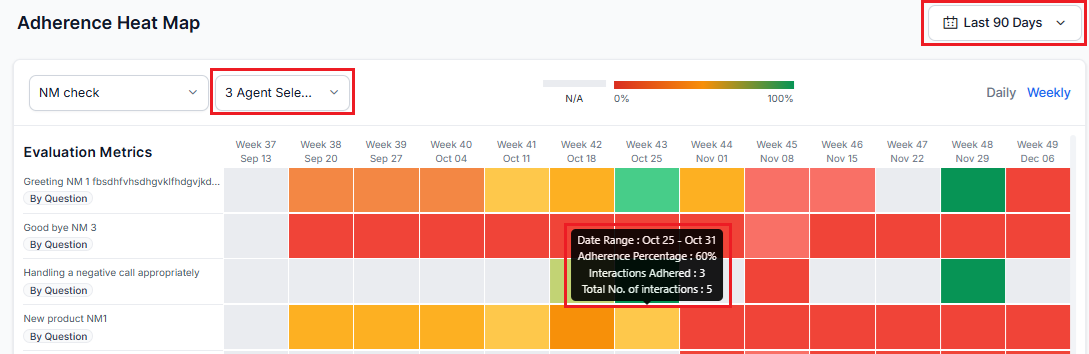

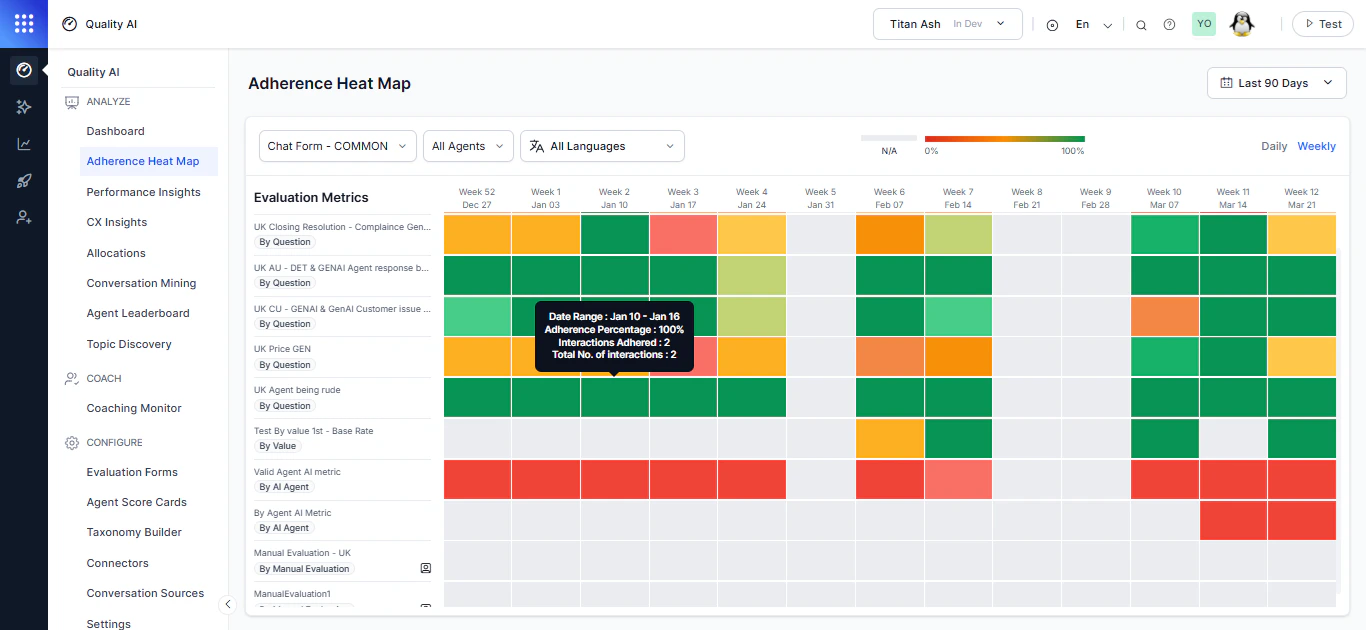

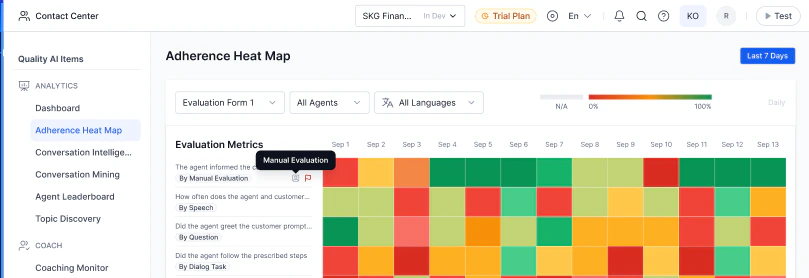

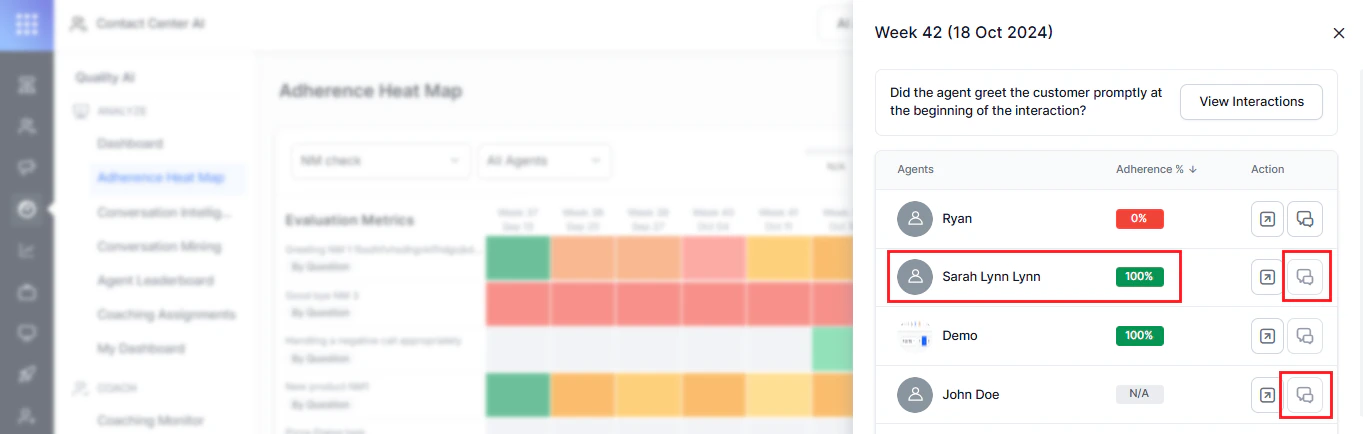

The Adherence Heatmap helps supervisors track how consistently agents meet evaluation metrics over time. It provides a visual view of trends across evaluation forms, metrics, queues, agents, languages, date ranges, and interaction direction (inbound or outbound).

It enables you to identify recurring quality gaps, compare performance across interaction types, monitor compliance trends, and support data-driven coaching decisions. Users with Cross Queue Data Access can view data across all queues, while others are limited to their assigned queues.

By default, the heatmap includes only eligible evaluated interactions in adherence calculations. It excludes interactions marked Below Threshold or Duration Unavailable unless you manually evaluate them. A contact may fall below the threshold for one scorecard but remain eligible for another, depending on scorecard-specific duration rules.

Key Capabilities

| Use Case | How It Helps |
|---|---|
| Trend Monitoring | Tracks adherence trends across time and evaluation metrics. |
| Compliance Analysis | Identifies recurring failed and fatal quality metrics. |
| Directional Insights | Analyzes inbound, outbound, or combined interactions separately. |
| Agent Coaching | Highlights low-performing agents and metrics for targeted coaching. |
| Interaction Review | Opens failed interactions directly in Conversation Mining via the Duration Status filter. |
| Scorecard-based Duration Rules | Applies scorecard-specific duration thresholds to determine interaction eligibility. |
| Manual Override | Includes flagged interactions in adherence metrics after manual evaluation. |

Access Adherence Heatmap

Navigate to Quality AI > Analyze > Adherence Heatmap.

Select an evaluation form from the dropdown to view adherence metrics.


Enable Auto QA in Settings > Quality AI General Settings to access this feature.

Adherence Percentage Calculation

The system calculates adherence using only evaluated interactions that are eligible for aggregation, based on the selected evaluation form, agent, and date range.

Adherence % = (Adhered evaluated interactions ÷ Applicable evaluated interactions) × 100
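The calculation above can be sketched in a few lines of Python. This is an illustration only, not the product's implementation: the function name `adherence_percentage` and the choice to return `None` when no interactions are applicable are assumptions made for the example.

```python
from typing import Optional


def adherence_percentage(adhered: int, applicable: int) -> Optional[float]:
    """Return adherence as a percentage of applicable evaluated interactions.

    Returns None when there are no applicable interactions (an assumed
    convention for this sketch, standing in for an empty heatmap tile).
    """
    if adhered < 0 or applicable < 0 or adhered > applicable:
        raise ValueError("counts must satisfy 0 <= adhered <= applicable")
    if applicable == 0:
        return None
    return 100.0 * adhered / applicable


# 18 adhered out of 24 applicable evaluated interactions
print(adherence_percentage(18, 24))  # 75.0
```

Returning `None` instead of 0 keeps "no data" distinct from "0% adherence", which matters because the two cases are displayed differently in the heatmap.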

Handling Flagged Interactions

By default, interactions marked Below Threshold or Duration Unavailable are excluded from adherence metrics and dashboard aggregates unless manually evaluated. After manual evaluation, the system includes these interactions in heatmap metrics and treats them as standard evaluated interactions.

A contact may fall below the threshold for one scorecard but still qualify for evaluation under another based on scorecard-specific duration rules. Duration checks don’t block ingestion, transcript storage, or Conversation Mining.

Flagged interactions remain available in Conversation Mining through the Duration Status filter (All, Evaluated Only, Below Threshold, and Duration Unavailable). The system also displays exclusion reasons and supports consistent drill-down behavior in interaction details.

Interaction Eligibility Rules

| Interaction Type | Included in Heatmap |
|---|---|
| Evaluated | Yes |
| Below Threshold | No |
| Duration Unavailable | No |
| Manually Evaluated Flagged Interaction | Yes |
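The eligibility table can be expressed as a small predicate. The sketch below is hypothetical: the `Interaction` class and its `duration_status` and `manually_evaluated` fields are invented for illustration and do not reflect a documented data model.

```python
from dataclasses import dataclass

# Status labels taken from the table above.
FLAGGED_STATUSES = {"Below Threshold", "Duration Unavailable"}


@dataclass
class Interaction:
    duration_status: str              # hypothetical field name
    manually_evaluated: bool = False  # hypothetical field name


def included_in_heatmap(interaction: Interaction) -> bool:
    """Flagged interactions count only after manual evaluation."""
    if interaction.duration_status in FLAGGED_STATUSES:
        return interaction.manually_evaluated
    return interaction.duration_status == "Evaluated"


sample = [
    Interaction("Evaluated"),
    Interaction("Below Threshold"),
    Interaction("Duration Unavailable"),
    Interaction("Below Threshold", manually_evaluated=True),
]
print([included_in_heatmap(i) for i in sample])  # [True, False, False, True]
```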

Metric Applicability

| Metric Type | When It Applies |
|---|---|
| Static (by question) | Applies to all evaluated interactions that are eligible for aggregation within the selected evaluation form and queue. |
| Dynamic (by question) | Applies only when the defined trigger condition is detected. |
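As a rough illustration of how applicability affects the denominator of the adherence calculation, the sketch below counts applicable interactions for a metric. The `MetricCheck` structure and its fields are assumptions made for this example, not part of the product.

```python
from dataclasses import dataclass
from typing import List


@dataclass
class MetricCheck:
    metric_type: str                # "static" or "dynamic" (hypothetical labels)
    trigger_detected: bool = False  # only meaningful for dynamic metrics


def is_applicable(check: MetricCheck) -> bool:
    """Static metrics always apply; dynamic metrics need their trigger."""
    if check.metric_type == "static":
        return True
    if check.metric_type == "dynamic":
        return check.trigger_detected
    raise ValueError(f"unknown metric type: {check.metric_type!r}")


def applicable_count(checks: List[MetricCheck]) -> int:
    """Number of eligible interactions where the metric applies."""
    return sum(1 for c in checks if is_applicable(c))


checks = [
    MetricCheck("dynamic", trigger_detected=True),
    MetricCheck("dynamic", trigger_detected=False),
    MetricCheck("dynamic", trigger_detected=True),
]
print(applicable_count(checks))  # 2
```

Under this reading, an interaction where a dynamic metric's trigger never fires counts neither for nor against adherence on that metric.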

Color Coding

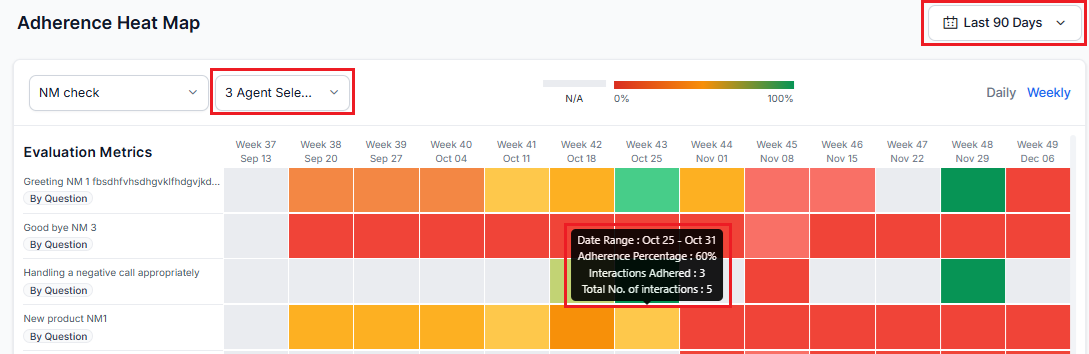

Each heatmap tile is color-coded by adherence percentage. Red indicates 0% adherence, green indicates 100% adherence, and intermediate values display in 10% increments between red and green. The system displays gray when no eligible evaluated interactions match the selected filters.
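One way to picture the color scale is as eleven shades from 0 to 100 plus gray. The sketch below assumes values are rounded down to the nearest 10% step; the actual rounding rule and the `shade-N` labels are assumptions, since the source states only that intermediate values display in 10% increments.

```python
from typing import Optional


def tile_shade(adherence: Optional[float]) -> str:
    """Map an adherence percentage to a shade label.

    "shade-0" stands for red and "shade-100" for green. None means no
    eligible evaluated interactions matched the filters, shown as gray.
    """
    if adherence is None:
        return "gray"
    if not 0 <= adherence <= 100:
        raise ValueError("adherence must be between 0 and 100")
    step = int(adherence // 10) * 10  # assumed: round down to a 10% step
    return f"shade-{step}"


print(tile_shade(75.0))   # shade-70
print(tile_shade(100.0))  # shade-100
print(tile_shade(None))   # gray
```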

Failed and Fatal Interactions

| Category | Description |
|---|---|
| Failed | Interactions that don’t meet quality or compliance thresholds (for example, missed greetings, incorrect information, or compliance breaches). |
| Fatal Errors | Critical issues that automatically set adherence to 0% when they occur (red flag). |
| Usage for Coaching | Supervisors and agents use fatal error flags, heatmaps, and question-level feedback for self-assessment and coaching preparation. See AI-Assisted Manual Audit for additional workflows. |

Filters

Filters help supervisors drill down into agent performance based on selected criteria.

Date Range

Use the Date Range filter to view interaction data for a selected time period. By default, the system displays data for the last 7 days and uses the user’s system time zone.

You can choose predefined ranges such as Yesterday, Last 7 Days, Last 28 Days, and Last 90 Days, or select a Custom Range of up to 31 days. Custom ranges display data from 12:00 AM to 11:59 PM in the user’s time zone.
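The custom range rules can be sketched as a validation helper. This is a minimal example under stated assumptions: the function name `custom_range_bounds` is hypothetical, and counting both the start and end dates toward the 31-day limit is a guess rather than documented behavior.

```python
from datetime import date, datetime, time, timezone, tzinfo
from typing import Tuple

MAX_CUSTOM_RANGE_DAYS = 31  # limit stated above


def custom_range_bounds(start: date, end: date, tz: tzinfo) -> Tuple[datetime, datetime]:
    """Validate a custom range and return its bounds in the user's time zone.

    The range runs from 12:00 AM on the start date to 11:59 PM on the end date.
    """
    if end < start:
        raise ValueError("end date is before start date")
    days = (end - start).days + 1  # assumed: both endpoints are counted
    if days > MAX_CUSTOM_RANGE_DAYS:
        raise ValueError(f"custom range spans {days} days; limit is {MAX_CUSTOM_RANGE_DAYS}")
    return (
        datetime.combine(start, time(0, 0), tzinfo=tz),
        datetime.combine(end, time(23, 59), tzinfo=tz),
    )


lo, hi = custom_range_bounds(date(2024, 3, 1), date(2024, 3, 31), timezone.utc)
print(lo.isoformat())  # 2024-03-01T00:00:00+00:00
print(hi.isoformat())  # 2024-03-31T23:59:00+00:00
```

Passing the time zone explicitly mirrors the note that ranges follow the user's time zone rather than a fixed server zone.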

Manual Evaluation Metrics Indicator

Manual Evaluation metrics display with a visual identifier. When no data is available for them in the Adherence Heatmap, they represent unaudited conversations.

Evaluation Form

Select an evaluation form from the dropdown to view adherence metrics. The selected form determines the available metrics, queues, languages, and agents displayed in the Heatmap.

You can set a default evaluation form across your assigned queues. The system saves this selection across sessions on the Heatmap page and QA dashboard. When you update the default form, the system refreshes the Heatmap context, including fatal metric highlighting.

Interaction Directions

Analyze adherence by interaction direction: Inbound (customer-initiated), Outbound (agent-initiated), or All Directions (combined). All Directions is the default selection and aggregates adherence totals and percentages across all eligible interactions.

Agent

Supports multi-select. By default, all agents in the selected queues are shown. You can search and select agents to view adherence for their interactions; only relevant tiles remain active, while others appear disabled.

Language

Supports multi-select from the dropdown. The available languages are those configured in the selected evaluation form. When applied, the heatmap displays metrics only for the selected languages.

Heatmap Interactions

Adherence Heatmap Overview

The heatmap interface tracks agent adherence across selected metrics over a chosen date range, based on filters such as agents, evaluation form, and interaction status. Each tile represents a metric and displays interaction data for the selected period.

Adherence Display

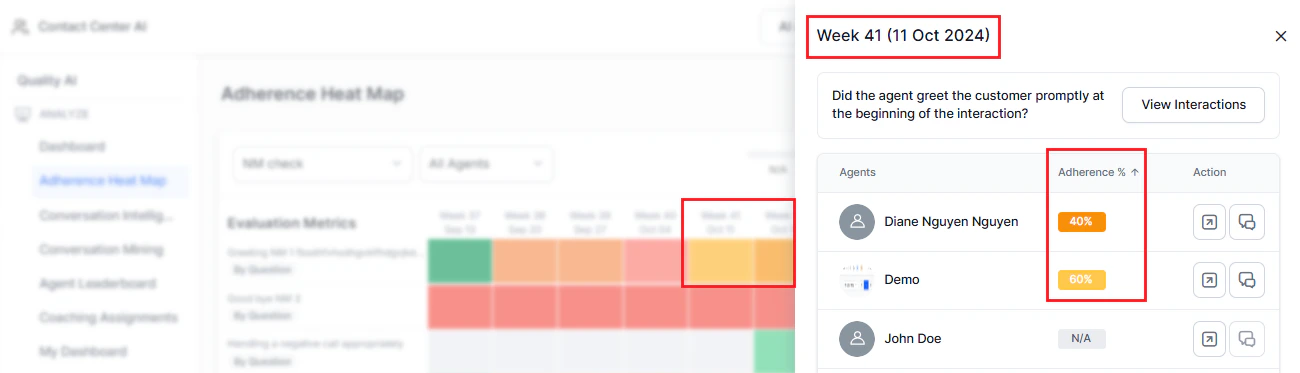

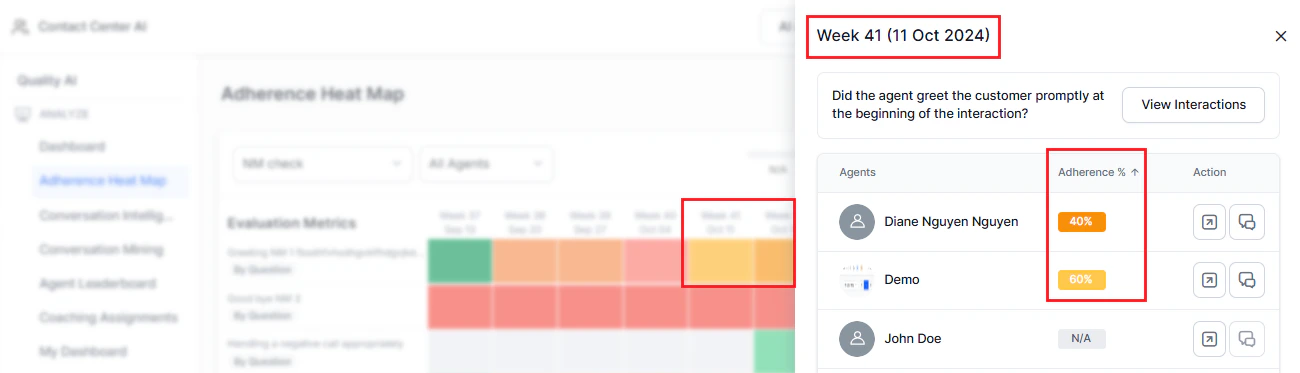

Sort the adherence column by percentage; by default, results are shown from lowest to highest adherence.

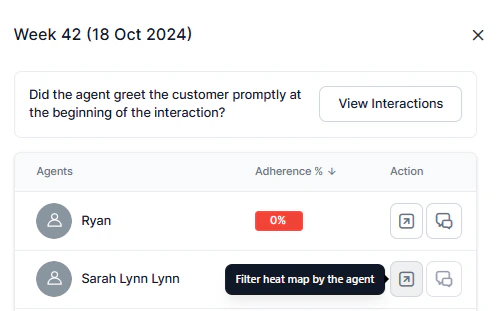

Agent-Level Breakdown

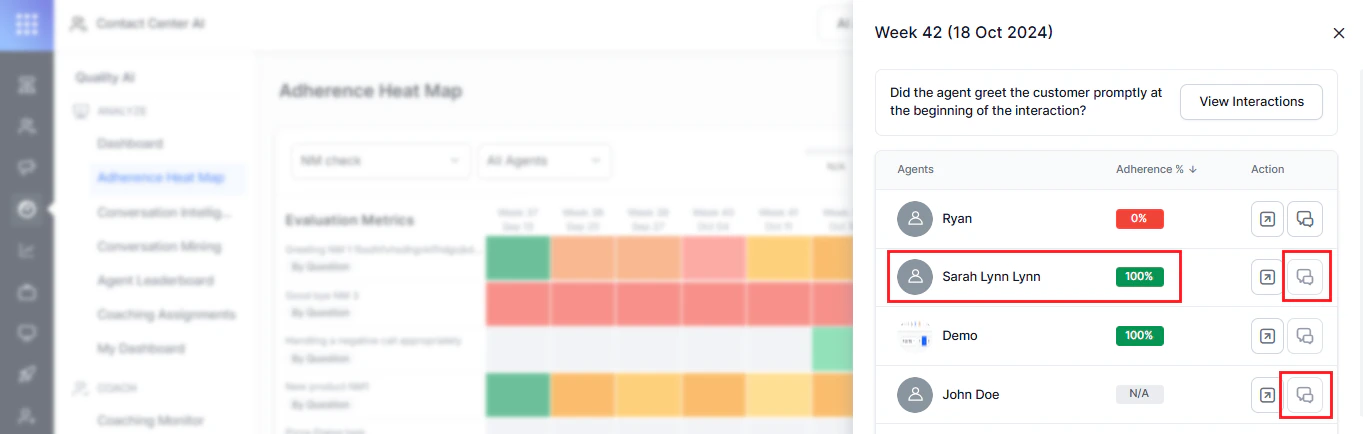

Select a date range tile to view an agent-wise breakdown for the selected metric. Agents are ordered from lowest to highest adherence, and each row displays the agent's adherence percentage.

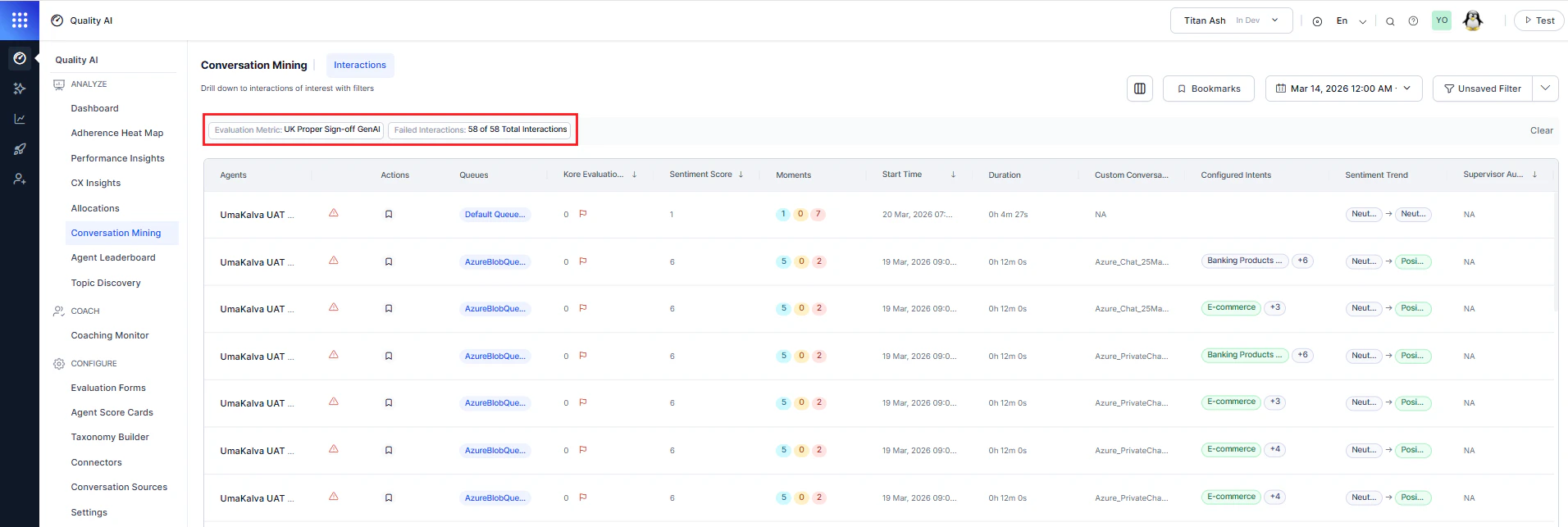

View Interactions

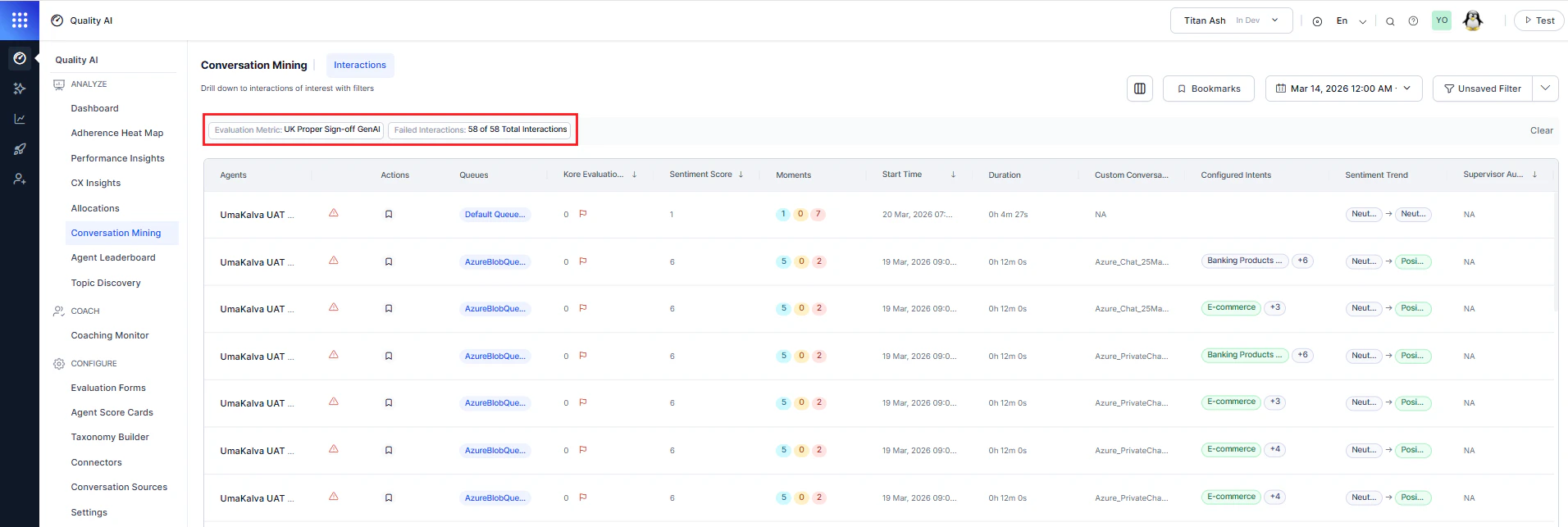

Opens the Conversation Mining page with failed interactions for all agents. The applied filters (displayed as Unsaved) include the evaluation metric name and metric qualification (pass or fail count).

Interaction Display Behavior

Interaction Display Rules:

Agents with no applicable interactions display at the bottom of the list.

Tiles display No Interactions for N/A cases and remain gray when there are no eligible interactions, while agents with 100% adherence show No Failed Interactions. The View Interactions option is disabled for agents with no applicable interactions or 100% adherence.
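The ordering and labeling rules above can be sketched as follows. The `AgentRow` type and function names are hypothetical, and representing "no applicable interactions" as `None` is an assumption made for the example.

```python
from typing import List, NamedTuple, Optional, Tuple


class AgentRow(NamedTuple):
    name: str
    adherence: Optional[float]  # None = no applicable interactions


def order_rows(rows: List[AgentRow]) -> List[AgentRow]:
    """Lowest adherence first; agents without applicable interactions last."""
    return sorted(
        rows,
        key=lambda r: (r.adherence is None, r.adherence if r.adherence is not None else 0.0),
    )


def row_display(row: AgentRow) -> Tuple[str, bool]:
    """Return (label, whether View Interactions is enabled) for a row."""
    if row.adherence is None:
        return ("No Interactions", False)
    if row.adherence == 100.0:
        return ("No Failed Interactions", False)
    return (f"{row.adherence:.0f}%", True)


rows = [AgentRow("Ana", 100.0), AgentRow("Ben", None), AgentRow("Cy", 40.0)]
for row in order_rows(rows):
    print(row.name, row_display(row))
# Cy ('40%', True)
# Ana ('No Failed Interactions', False)
# Ben ('No Interactions', False)
```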


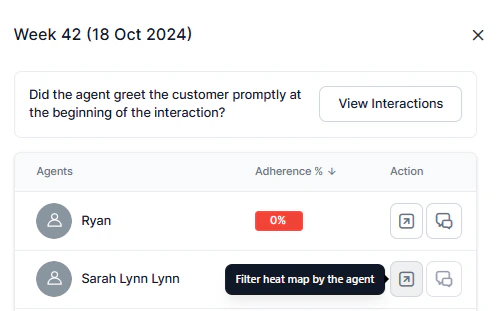

Action Filters

| Action | Description |
|---|---|
| Filter Heatmap by Agent | Selecting the icon navigates to the agent’s heatmap metrics page for the selected date range. |
| View Failed Interactions | Selecting the agent interaction icon opens the Interactions page in Conversation Mining for that agent’s failed interactions. |

Dashboard View

Displays a simplified, non-interactive Adherence Heatmap for the last 7 days using the default evaluation form. It includes only evaluated interactions that are eligible for aggregation. Interactions marked Below Threshold or Duration Unavailable are excluded by default unless manually evaluated. Excluded interactions remain available in Conversation Mining and Reports.

For details about the dashboard experience and available widgets, see Supervisor Dashboard.

View Interactions

Select View Interactions to open Conversation Mining with metric-based filters and notification tags. By default, the system excludes Below Threshold and Duration Unavailable interactions from heatmap calculations unless they are manually evaluated. Notification tags appear only when you navigate from the Adherence Heatmap.