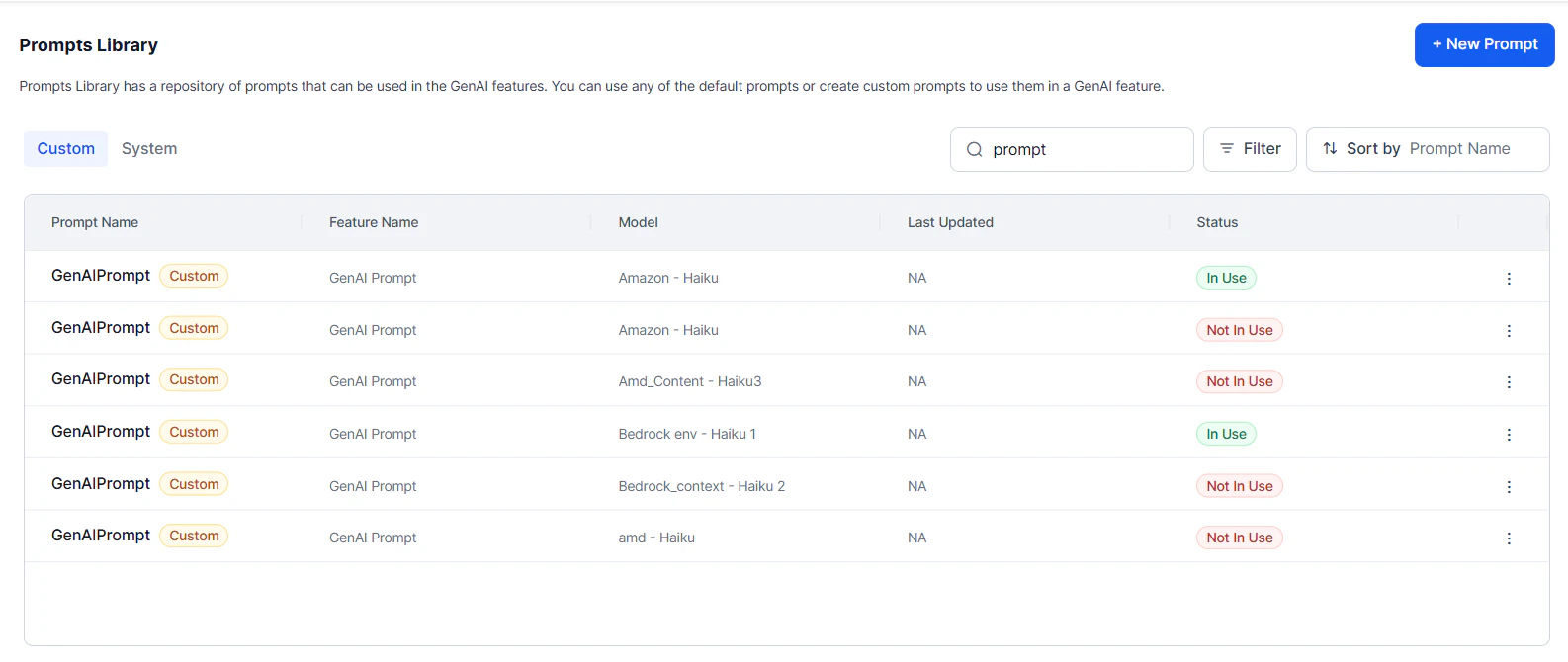

The Prompts Library lets you create, test, and manage the prompt templates that power your AI Agent’s generative AI features. It lists all system and custom prompts and shows the usage status (“In Use” or “Not In Use”) of custom prompts. A post-processor is available for both default and custom prompts; it refines LLM responses at runtime so they align with what the Platform expects.

Reference Documents

| Document | Description |
|---|---|
| Custom Prompts | Step-by-step guide for adding custom prompts. |
| Streaming Responses | Enable and configure real-time incremental LLM output for Agent Node and Prompt Node. |
| LLM Response Format | Reference JSON formats for regular, streaming, and tool-calling responses from OpenAI and Azure OpenAI. |

Search and Filter

Use the Quick Filter to filter by prompt type (system or custom). Use smart search to find prompts by name, feature, or model. You can also filter by type, label, status, model, or feature name, and sort the results by any of these criteria.

More Options

The More Options menu next to each prompt provides the following actions:

| Action | Description |
|---|---|
| View Associations | See all nodes and features where the prompt is applied. |
| Edit | Modify custom prompts. |
| Delete | Permanently delete unused custom prompts. “In Use” and system prompts can’t be deleted. |
| Advanced Configuration | For system prompts, adjust temperature and token limit. |
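The deletion rule in the table reduces to a simple check. The sketch below assumes a hypothetical `Prompt` record with `kind` (`"system"` or `"custom"`) and `in_use` fields; these names are illustrative only and are not part of the Platform API.

```python
from dataclasses import dataclass


@dataclass
class Prompt:
    """Hypothetical prompt record; field names are illustrative only."""
    name: str
    kind: str      # "system" or "custom"
    in_use: bool   # True when a custom prompt shows as "In Use"


def can_delete(prompt: Prompt) -> bool:
    """Only custom prompts that are not in use may be deleted."""
    return prompt.kind == "custom" and not prompt.in_use


prompts = [
    Prompt("summary-v2", "custom", in_use=False),
    Prompt("summary-v1", "custom", in_use=True),
    Prompt("default-summary", "system", in_use=False),
]
deletable = [p.name for p in prompts if can_delete(p)]
print(deletable)  # ['summary-v2']
```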

Prompt Types

Default Prompts

Ready-to-use templates for pre-built models (OpenAI, Azure OpenAI). Each template targets a specific feature and model.

- Cannot be edited directly.
- To customize, import a default prompt and save it as a custom prompt for any pre-built or custom LLM.

Custom Prompts

Full control over prompt design for any pre-built or custom LLM. Build from scratch or import and modify existing prompts to match your tone, context, and business requirements.

Custom LLM integration and prompt creation are available in English only.

Regular vs. Streaming Prompts

Regular prompts generate a complete response after processing the full input, which suits summaries, reports, and structured output. Streaming prompts deliver responses incrementally in real time and are tagged “streaming” in the Prompts Library. See Streaming Responses for setup and benchmarks.

Comparison

| Feature | Regular | Streaming |
|---|---|---|
| Response Delivery | Full response at once | Tokens delivered incrementally |
| Parameter Requirements | Standard | Requires "stream": true |
| Exit Scenarios | Supported | Not supported |
| AI Agent Response | Supported | Not supported |
| Collected Entities | Supported | Must be in streamed format |
| Tool Call Requests | Supported | Supported |
| Post-Processing | Available | Not available |
| Guardrails | Supported | Not supported |
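To illustrate the Parameter Requirements and Response Delivery rows, the sketch below builds an OpenAI-style Chat Completions request body with `"stream": true` and reassembles the incremental chunks into the full text. It assumes the OpenAI streaming chunk shape, where each chunk carries new text in `choices[0].delta.content`. The model name and sample chunks are placeholders, and the Platform's own request template may differ (see LLM Response Format).

```python
import json


def build_request(messages: list[dict], stream: bool) -> str:
    """Return a JSON request body; streaming prompts set "stream": true."""
    body = {
        "model": "your-model-name",  # placeholder, not a real model ID
        "messages": messages,
        "stream": stream,
    }
    return json.dumps(body)


def assemble_stream(chunks: list[dict]) -> str:
    """Concatenate delta text from streamed chunks into the full response."""
    parts = []
    for chunk in chunks:
        for choice in chunk.get("choices", []):
            delta = choice.get("delta", {})
            # The first chunk may carry only the role; later chunks carry text.
            if delta.get("content"):
                parts.append(delta["content"])
    return "".join(parts)


request = build_request([{"role": "user", "content": "Hi"}], stream=True)
print(request)

# Illustrative chunks in the OpenAI streaming shape (not real model output).
sample_chunks = [
    {"choices": [{"index": 0, "delta": {"role": "assistant"}}]},
    {"choices": [{"index": 0, "delta": {"content": "Hello"}}]},
    {"choices": [{"index": 0, "delta": {"content": ", how can I help?"}}]},
    {"choices": [{"index": 0, "delta": {}, "finish_reason": "stop"}]},
]
print(assemble_stream(sample_chunks))  # Hello, how can I help?
```

Because a streaming consumer only sees the text once the chunks are joined, checks that need the complete response (post-processing, guardrails) cannot run before delivery, which matches the Not supported entries above.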

When to Use Each

Use Regular when:

- Post-processing or content moderation is required.
- Tool calls are needed for Agent Node.
- BotKit response interception is needed.
- Full response validation must happen before delivery.

Use Streaming when:

- Real-time interaction is critical.
- Responses are expected to be long.
- Voice-based applications need incremental speech.
- Immediate feedback improves user experience.
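The guidance above can be condensed into a small decision helper. This is a hypothetical sketch, not a Platform feature: the `Requirements` flags are illustrative names, and falling back to Regular when no streaming need applies is an assumption.

```python
from dataclasses import dataclass


@dataclass
class Requirements:
    """Hypothetical checklist mirroring the guidance above."""
    needs_post_processing: bool = False      # post-processing or content moderation
    needs_guardrails: bool = False
    needs_botkit_interception: bool = False
    needs_full_validation: bool = False      # validate before delivery
    prefers_incremental: bool = False        # real-time, long, or voice responses


def choose_prompt_type(req: Requirements) -> str:
    # Regular-only needs take precedence: streaming can't post-process,
    # apply guardrails, or be validated before delivery.
    if (req.needs_post_processing or req.needs_guardrails
            or req.needs_botkit_interception or req.needs_full_validation):
        return "regular"
    if req.prefers_incremental:
        return "streaming"
    return "regular"  # assumed default when nothing favors streaming


print(choose_prompt_type(Requirements(prefers_incremental=True)))  # streaming
```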

Implementation Notes

- Both types require responses to include conv_status, the AI Agent response, and collected entities. Streaming prompts must structure this content for incremental delivery.
- Regular prompts can be fully validated before delivery. Streaming prompts require careful prompt engineering, because corrections can’t be made mid-stream.
- Streaming responses include additional analytics: TTFT (Time to First Token) and Response Duration (time from the first chunk to the last chunk).
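The two streaming metrics can be computed from timestamps. This is a minimal sketch that assumes TTFT is measured from when the request is sent to when the first chunk arrives, and that all timestamps come from one monotonic clock (for example `time.monotonic()`). The function name and sample values are illustrative, not Platform analytics output.

```python
def streaming_metrics(request_sent: float, chunk_times: list[float]) -> tuple[float, float]:
    """Return (ttft, response_duration) in seconds.

    ttft: request sent -> first chunk received (assumed definition).
    response_duration: first chunk -> last chunk.
    """
    if not chunk_times:
        raise ValueError("no chunks received")
    first, last = min(chunk_times), max(chunk_times)
    return first - request_sent, last - first


# Illustrative timestamps in seconds (not measured data).
ttft, duration = streaming_metrics(10.0, [10.25, 10.5, 11.0, 12.75])
print(f"TTFT={ttft:.2f}s, duration={duration:.2f}s")  # TTFT=0.25s, duration=2.50s
```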