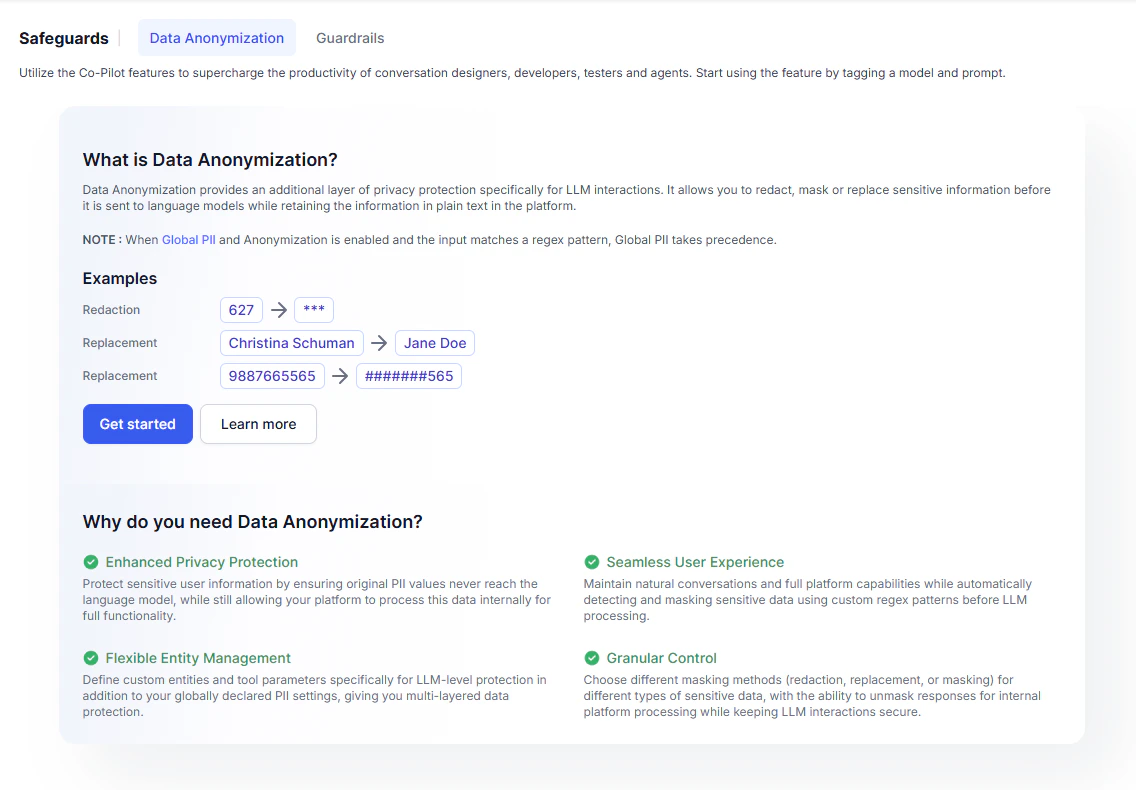

Mask PII and sensitive data in user input before it reaches the LLM, while preserving full end-to-end functionality.

Overview

Personally identifiable information (PII) is any data that can identify, contact, or locate an individual, such as Social Security Numbers, email addresses, credit card numbers, CVV codes, passport numbers, and home addresses. The Platform detects sensitive data using regex patterns you define, anonymizes it before sending the request to the LLM, and restores the original values after the LLM responds. Conversation history and debug logs always show the original data. LLM-layer anonymization works alongside globally declared PII settings; where the two overlap, the global settings take precedence.

Anonymization Methods

| Method | Description |
|---|---|
| Redaction | Replaces the value with a placeholder that hides it entirely. |
| Replacement | Substitutes the value with a predefined string. |
| Mask with Character | Conceals part of the value while preserving a recognizable format (for example, showing only the last four digits of a card number). |
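To make the difference between the three methods concrete, here is a minimal Python sketch. It is not the Platform's implementation: the 16-digit card pattern, the `[REDACTED]` placeholder, the `<CARD>` replacement string, the `*` mask character, and the function names `redact_value`, `replace_value`, and `mask_value` are all hypothetical choices made for illustration.

```python
import re

# Hypothetical pattern: a 16-digit card number that is not part of a longer digit run.
CARD_PATTERN = re.compile(r"(?<!\d)\d{16}(?!\d)")


def redact_value(text: str, pattern: re.Pattern) -> str:
    """Redaction: hide each match entirely behind a placeholder (assumed '[REDACTED]')."""
    return pattern.sub("[REDACTED]", text)


def replace_value(text: str, pattern: re.Pattern, substitute: str) -> str:
    """Replacement: swap each match for a predefined string."""
    return pattern.sub(substitute, text)


def mask_value(text: str, pattern: re.Pattern, char: str = "*", keep_last: int = 4) -> str:
    """Mask with Character: conceal all but the last `keep_last` characters of each match."""
    def _mask(m: re.Match) -> str:
        value = m.group(0)
        hidden = max(len(value) - keep_last, 0)
        return char * hidden + value[hidden:]
    return pattern.sub(_mask, text)


if __name__ == "__main__":
    msg = "Charge card 4111111111111111 today."
    print(redact_value(msg, CARD_PATTERN))             # Charge card [REDACTED] today.
    print(replace_value(msg, CARD_PATTERN, "<CARD>"))  # Charge card <CARD> today.
    print(mask_value(msg, CARD_PATTERN))               # Charge card ************1111 today.
```

Masking keeps the value recognizable to a human reader (the last four digits survive), while redaction and replacement remove every digit from what the LLM sees.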

Supported Features

Data anonymization applies during LLM interactions for the following features:

- Agent Node
- Conversation Summary
- DialogGPT
- Disposition Prediction for Agent Wrap-Up
- Prompt Node
- Rephrase Response
- Sentiment Analysis

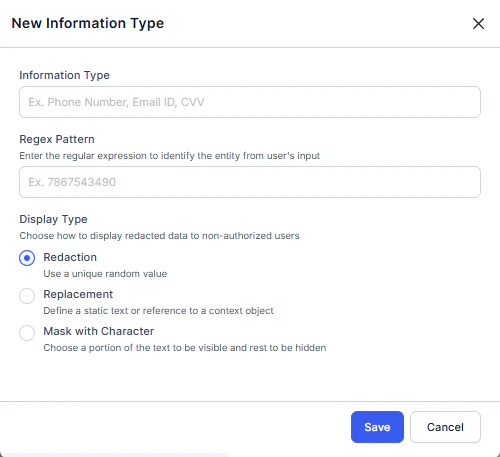

Configure Data Anonymization

- Go to Generative AI Tools > Safeguards > Data Anonymization.
- Click Get Started or + New Field.
- Enter the Information Type and Regex Pattern, then select the Display Type.

  Define patterns as substrings, not exact values. The Platform scans the entire request payload, not just the user input, so an anchored pattern such as `^890839$` only matches when the scanned text consists of exactly that value. Write a regex that matches the target value within a broader context instead. For example, to redact `890839`, use `(?<!\d)890839(?!\d)` rather than `^890839$`; the lookarounds stop the pattern from matching those digits inside a longer number.
- Click Save.
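The effect of anchoring can be checked with Python's `re` module. This sketch assumes the Platform's regex flavor treats `^`, `$`, and lookarounds the way Python does, and the JSON-style payload is a made-up stand-in for the real request payload, which is not shown here.

```python
import re

# Hypothetical request payload: the target value is embedded in a larger body of text.
payload = '{"messages": [{"role": "user", "content": "My account ID is 890839, please check it."}]}'

anchored = re.compile(r"^890839$")              # Only matches if the whole string is "890839"
substring = re.compile(r"(?<!\d)890839(?!\d)")  # Matches 890839 anywhere, but not inside a longer number

print(anchored.search(payload))               # None: the payload is not exactly "890839"
print(substring.search(payload) is not None)  # True
print(substring.sub("[REDACTED]", payload))   # ...My account ID is [REDACTED], please check it...

# The lookarounds prevent a false positive inside a longer digit run.
print(substring.search("Order 18908391") is None)  # True
```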

Prompt Guidance for Smaller Models

For smaller LLMs, add explicit instructions to the system prompt telling the model not to modify redacted values. If the model alters a redacted value, the Platform cannot restore the original, which breaks end-to-end functionality. The Platform wraps redacted values with `##`; reference this marker in your prompt instructions.
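A minimal sketch of an anonymize-and-restore round trip shows why an altered marker cannot be restored. It assumes each redacted value is stored as a token wrapped in `##` on both sides (for example, `##PII_1##`) and mapped back to its original value; the token naming scheme, the simplified email pattern, and the `anonymize` and `restore` functions are hypothetical, not the Platform's actual format or API.

```python
import re

# Hypothetical, simplified email pattern used only for this illustration.
EMAIL = re.compile(r"[\w.+-]+@[\w-]+\.[\w.]+")


def anonymize(text: str) -> tuple[str, dict[str, str]]:
    """Replace each match with a ##-wrapped token and remember the original value."""
    mapping: dict[str, str] = {}

    def _token(m: re.Match) -> str:
        token = f"##PII_{len(mapping) + 1}##"
        mapping[token] = m.group(0)
        return token

    return EMAIL.sub(_token, text), mapping


def restore(text: str, mapping: dict[str, str]) -> tuple[str, list[str]]:
    """Swap tokens back to their original values; report tokens the LLM dropped or altered."""
    missing = [tok for tok in mapping if tok not in text]
    for tok, original in mapping.items():
        text = text.replace(tok, original)
    return text, missing


if __name__ == "__main__":
    prompt, mapping = anonymize("Email jane.doe@example.com about the refund.")
    print(prompt)  # Email ##PII_1## about the refund.

    # The model copied the token exactly, so restoration succeeds.
    print(restore("I will email ##PII_1## today.", mapping))
    # The model rewrote the token, so the original value cannot be put back.
    print(restore("I will email #PII_1 today.", mapping))
```

In the second call the altered token no longer matches anything in the mapping, so the response keeps the placeholder instead of the user's email address, which is the failure the prompt instructions are meant to prevent.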

Example 1 — Masked value: