
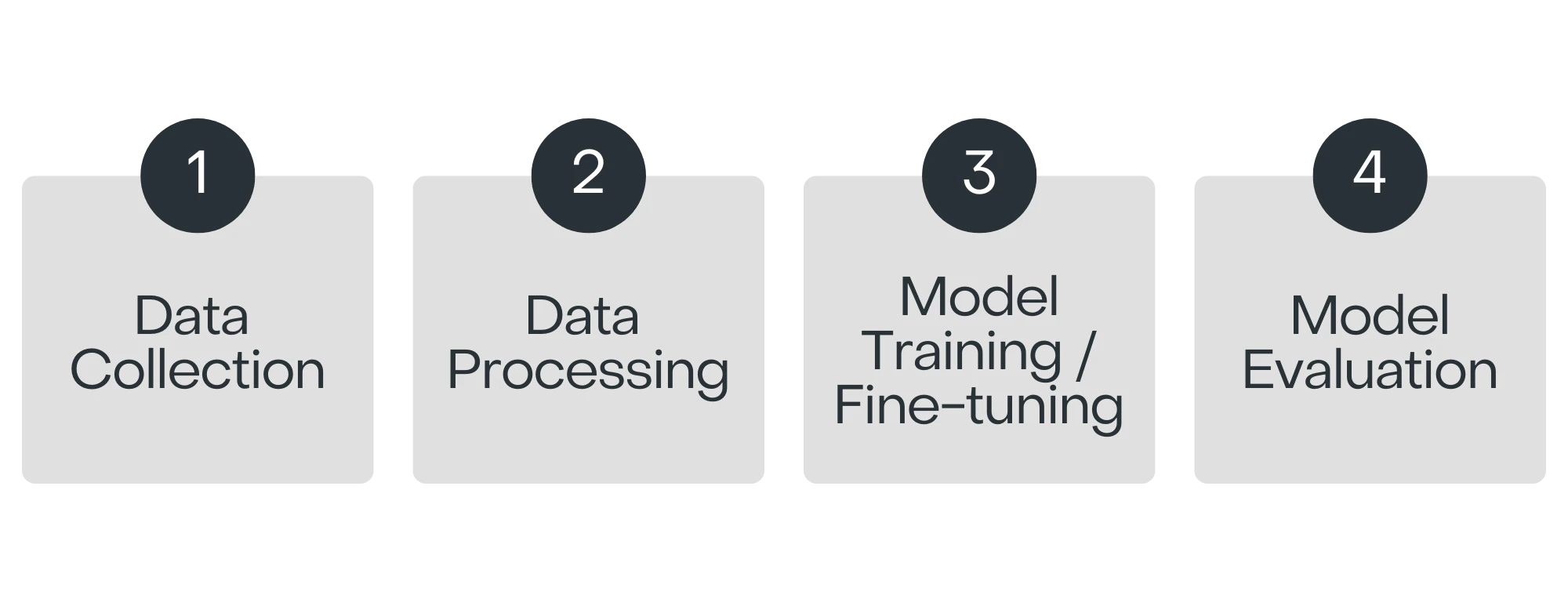

Model Building Process

Describes the end-to-end model building process, including data collection, processing, and evaluation.

Data Collection

Training data is gathered from conversations across multiple domains, including healthcare, banking, e-commerce, IT support, and finance. Training data sources:

- Synthetic data generated using Azure OpenAI GPT-4.

- Human experts manually create data tailored to the problem, its challenges, and the expected outcomes.

- A separate team of human annotators evaluates the data for relevancy, scenario coverage, and correctness.

- No customer data is used for training or evaluation.

Data Processing

Raw data is cleaned to remove irrelevant content, standardize formats, and prepare text for tokenization and normalization. This step ensures the text meets the base model's input requirements.

Fine-tuning techniques used:

| Technique | Description |
|---|---|
| Memory Efficiency | 4-bit precision loading and double quantization reduce memory usage while maintaining accuracy. |
| Low-Rank Adaptation (LoRA) | Applied to specific model layers; parameters such as rank, scaling factor, and dropout are tuned to minimize overfitting. |
| Optimized Training Parameters | Carefully selected learning rate, batch size, and number of epochs balance training efficiency with performance. |
| Advanced Optimization | State-of-the-art optimizer with optional warm-up steps, early stopping, and learning-rate (LR) scheduling. |
| Task-Specific Adaptation | Models are fine-tuned for causal language modeling to target the specific conversational task. |
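To make the Memory Efficiency row concrete, the sketch below estimates weight storage at 16-bit versus 4-bit precision, with and without double quantization. The block sizes (64 weights per constant, 256 constants per second-level constant) and fp32 constants are the QLoRA defaults and are an assumption here; the document does not state which values XO GPT uses, and the 7B model size is purely illustrative.

```python
# Rough weight-memory estimate for 4-bit loading, with and without double
# quantization. Block sizes are QLoRA defaults (ASSUMPTION, not stated by
# the source): each block of 64 weights shares one absmax constant; with
# double quantization those constants are stored in 8 bits, plus one fp32
# second-level constant per 256 of them.


def bits_per_param(weight_bits, block=64, double_quant=False, block2=256):
    """Average storage bits per weight, including quantization constants."""
    if not double_quant:
        # One fp32 constant per block of `block` weights.
        return weight_bits + 32 / block
    # 8-bit first-level constants plus fp32 second-level constants.
    return weight_bits + 8 / block + 32 / (block * block2)


def weight_gib(n_params, bits):
    """Weight memory in GiB for n_params parameters at `bits` bits each."""
    return n_params * bits / 8 / 2**30


n = 7e9  # hypothetical 7B-parameter base model, for illustration only
for label, bits in [
    ("16-bit", 16.0),
    ("4-bit", bits_per_param(4)),
    ("4-bit + double quant", bits_per_param(4, double_quant=True)),
]:
    print(f"{label:<22} {bits:6.3f} bits/param  {weight_gib(n, bits):6.2f} GiB")
```

Under these assumptions, the quantization constants cost 0.5 extra bits per weight without double quantization and about 0.127 bits with it. Activations, optimizer state, and adapter weights need memory beyond this estimate.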
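The LoRA row can be illustrated with a framework-free sketch: the pretrained weight stays frozen, and a trainable low-rank product B·A, scaled by alpha / r, is added to the layer's output, with dropout applied to the adapter's input during training. Rank `r`, scaling factor `alpha`, and `dropout` correspond to the three parameters named in the table; the class name, default values, and initialization (random A, zero B, a common LoRA convention) are illustrative assumptions, not XO GPT's actual configuration.

```python
import random


def matvec(m, v):
    """Multiply matrix m (list of rows) by vector v."""
    return [sum(a * b for a, b in zip(row, v)) for row in m]


class LoRALinear:
    """Hypothetical LoRA-wrapped linear layer: y = W x + (alpha / r) * B A x."""

    def __init__(self, weight, r=8, alpha=16, dropout=0.05, seed=0):
        self.rng = random.Random(seed)
        self.weight = weight  # frozen base weights, shape d_out x d_in
        d_out, d_in = len(weight), len(weight[0])
        self.r, self.scale, self.dropout = r, alpha / r, dropout
        # A is small and random, B is zero, so the adapter initially adds
        # nothing and the layer reproduces the base model exactly.
        self.A = [[self.rng.gauss(0, 0.01) for _ in range(d_in)] for _ in range(r)]
        self.B = [[0.0] * r for _ in range(d_out)]

    def forward(self, x, training=False):
        base = matvec(self.weight, x)
        if training and self.dropout > 0:
            # Inverted dropout on the adapter path only.
            keep = 1 - self.dropout
            x = [xi / keep if self.rng.random() < keep else 0.0 for xi in x]
        delta = matvec(self.B, matvec(self.A, x))
        return [b + self.scale * d for b, d in zip(base, delta)]

    def trainable_params(self):
        # Only A (r x d_in) and B (d_out x r) would be updated in training.
        return self.r * (len(self.A[0]) + len(self.B))
```

The parameter saving is the point of the design: for a hypothetical 4096 × 4096 projection with r = 8, the adapter trains 8 × (4096 + 4096) = 65,536 parameters instead of 16,777,216 (about 0.4%).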
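The Advanced Optimization row combines warm-up, LR scheduling, and early stopping. The sketch below shows one common way to wire these together: a linear warm-up followed by cosine decay, plus patience-based early stopping on validation loss. The cosine shape, peak learning rate, warm-up length, and patience are assumptions chosen for illustration; the document does not name the optimizer or schedule XO GPT uses.

```python
import math


def lr_at(step, total_steps, peak_lr=2e-4, warmup_steps=100, min_lr=0.0):
    """Linear warm-up to peak_lr, then cosine decay to min_lr (assumed shape)."""
    if warmup_steps > 0 and step < warmup_steps:
        return peak_lr * (step + 1) / warmup_steps
    progress = (step - warmup_steps) / max(1, total_steps - warmup_steps)
    progress = min(progress, 1.0)
    return min_lr + 0.5 * (peak_lr - min_lr) * (1 + math.cos(math.pi * progress))


class EarlyStopping:
    """Signal a stop after `patience` evaluations without improvement."""

    def __init__(self, patience=3, min_delta=0.0):
        self.patience, self.min_delta = patience, min_delta
        self.best = math.inf
        self.bad_evals = 0

    def should_stop(self, val_loss):
        if val_loss < self.best - self.min_delta:
            self.best = val_loss  # new best: reset the counter
            self.bad_evals = 0
        else:
            self.bad_evals += 1
        return self.bad_evals >= self.patience


stopper = EarlyStopping(patience=2)
for epoch, loss in enumerate([1.0, 0.8, 0.81, 0.82, 0.7]):
    if stopper.should_stop(loss):
        print(f"stopping after epoch {epoch}")  # prints "stopping after epoch 3"
        break
```

Warm-up keeps early updates small while optimizer statistics settle; early stopping halts training once validation loss stalls, which complements the overfitting controls in the LoRA row.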
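Fine-tuning for causal language modeling trains the model to predict each next token from the tokens before it. The sketch below turns one prompt/response pair of token IDs into inputs and shifted labels. Masking the prompt positions so that only the response contributes to the loss is a common choice but an assumption here; the `-100` ignore value matches PyTorch's default `ignore_index` for cross-entropy loss, and the function name is hypothetical.

```python
IGNORE = -100  # label value skipped by the loss (PyTorch's default ignore_index)


def build_example(prompt_ids, response_ids):
    """Return (input_ids, labels) for next-token prediction.

    labels[i] is the token the model should predict after reading
    input_ids[: i + 1]. Prompt targets are masked (an assumed convention).
    """
    ids = prompt_ids + response_ids
    input_ids = ids[:-1]
    labels = ids[1:]
    # The first len(prompt_ids) - 1 targets are still prompt tokens.
    n_mask = max(0, len(prompt_ids) - 1)
    labels = [IGNORE] * n_mask + labels[n_mask:]
    return input_ids, labels


print(build_example([1, 2, 3], [4, 5]))  # ([1, 2, 3, 4], [-100, -100, 4, 5])
```

Libraries such as Hugging Face Transformers usually perform this one-position shift inside the model, in which case labels are passed unshifted and only the masking step applies.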

Model Evaluation

- Metrics: Accuracy, fluency, hallucinations, robustness, AI safety, and bias.
- Validation techniques: Cross-validation and hold-out validation to verify that the model generalizes to unseen data.
- Evaluation data: Synthetic data generated by GPT models and data created by human experts, covering diverse topics and challenging inputs (typos, poor grammar, profanity).
- Notes: Internal benchmarks are based on synthetic data and may not generalize to all scenarios. Real-world results may vary based on hardware, network, and implementation specifics.

Model Benchmarks

For version-specific benchmarking details, see:

- Answer Generation Model

- Conversation Summarization Model

- DialogGPT Model

- Response Rephrasing Model

- User Query Paraphrasing Model

Model Roadmap

Maintenance

The model is reviewed, updated, and retrained regularly. Bug fixes and performance improvements are addressed on an ongoing basis; new features are added quarterly.

Expansion

- Multilingual Proficiency: Support for languages beyond English, French, Spanish, Japanese, Turkish, and German, refined through expert feedback.

- New Summary Templates: Custom templates such as Stepwise and PRA (Problem-Resolution-Action), developed on demand.

FOR EVALUATION PURPOSES ONLY

This document contains proprietary information of Kore.ai Inc. and is provided exclusively for evaluation. It does not grant any licenses, rights, or permissions regarding intellectual property.

DISCLAIMER: XO GPT is an advanced AI model that may require improvements over time. Outputs may occasionally be unpredictable, inaccurate, biased, or unexpected. Thoroughly test the model and adjust it for your specific use cases.